March 3, 2026 by Editor

Broadcasting a multinational corporation’s all-hands meeting, product launch, or critical investor update from a central business district headquarters presents a unique and formidable set of technical challenges. The very density that makes a CBD a hub of commerce creates significant hurdles for high-quality video transport, including congested networks and physical infrastructure limitations. For enterprise clients, the expectation is flawless, broadcast-grade delivery to a global audience, with no tolerance for failure. Achieving this requires a meticulously engineered workflow that goes far beyond simple web-conferencing solutions. It demands a deep understanding of signal acquisition, resilient transport protocols, and scalable cloud distribution architecture. This is not about pushing a stream to a public platform; it is about creating a secure, reliable, and high-quality extension of the corporate environment to stakeholders across the globe, ensuring the message is delivered with the same impact as being in the room.

The First Mile Challenge: Signal Integrity from the Corporate HQ

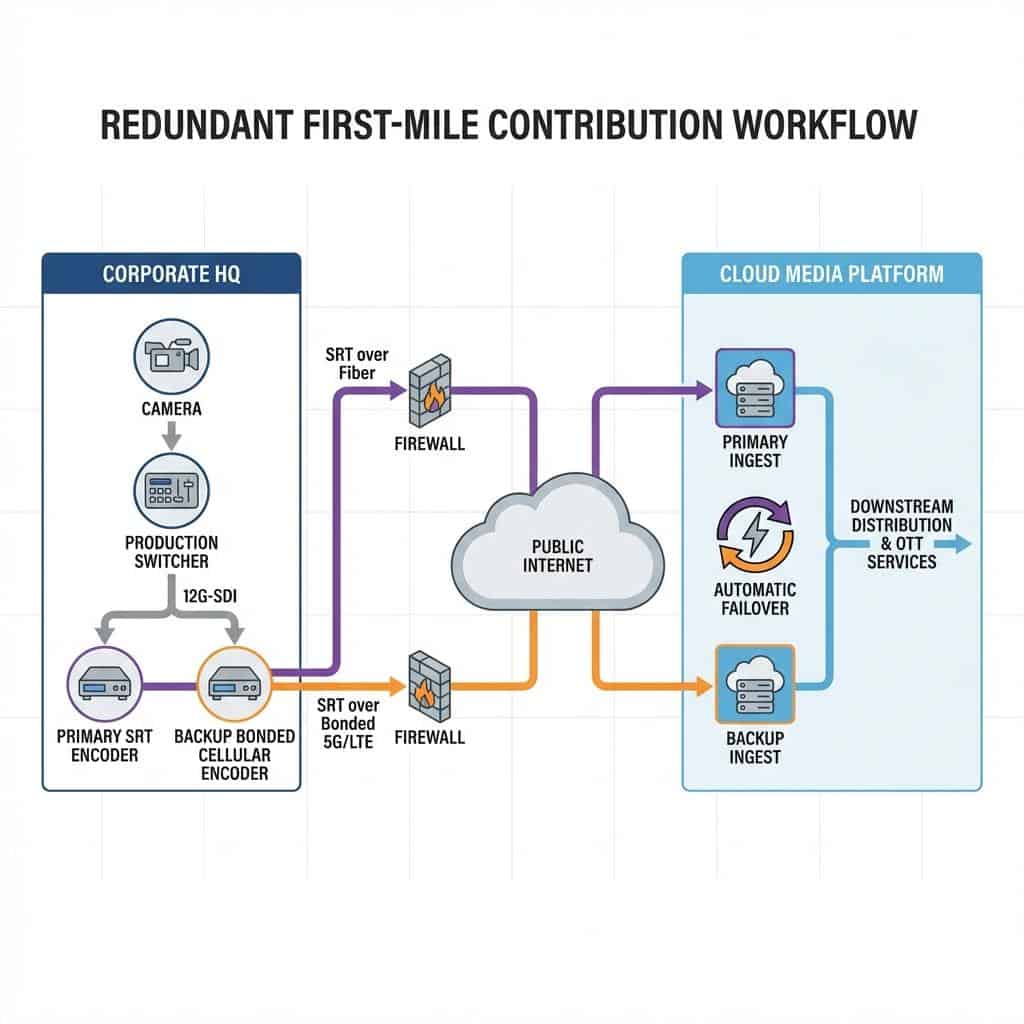

The most critical and vulnerable part of any global broadcast is the “first mile”: the process of capturing, producing, and transporting the primary audio and video signals from the event location to the cloud ingest point. Failure here means the entire broadcast fails. In a dense CBD environment, this requires a robust on-premises production setup and an intelligent strategy for egress over potentially contested networks.

Signal Acquisition and On-Site Routing

The foundation of any professional broadcast is pristine signal acquisition. This begins with broadcast-quality cameras, typically utilizing 4K/UHD sensors and outputting signals via 12G-SDI (Serial Digital Interface) to preserve maximum image fidelity. This uncompressed signal provides the raw data necessary for a high-quality final product. Inside the venue, a central production switcher, such as a Blackmagic Design ATEM Constellation or Ross Video Carbonite, becomes the heart of the operation. All video sources, including cameras, presentation laptops, and graphics systems, are routed into the switcher via SDI. The technical director cuts the program feed in real-time, creating the final output that will be sent to the world. For more complex routing within a corporate campus or large venue, IP-based workflows using NDI (Network Device Interface) offer tremendous flexibility. Full NDI can carry a visually lossless, low-latency video signal over a standard 10GbE network, while variants like NDI|HX provide a more compressed option suitable for 1GbE infrastructure, allowing production sources to be patched and routed with the flexibility of a data network rather than rigid physical cables.
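To make the link-rate reasoning above concrete, here is a rough back-of-the-envelope sketch. It assumes 10-bit 4:2:2 sampling (an average of 20 bits per pixel) and compares only the active-picture data rate against nominal SDI link rates; blanking and ancillary data are ignored, so treat the result as an approximation rather than a standards-level calculation. The function names are illustrative.

```python
# Nominal SDI link rates in Gbit/s.
SDI_LINK_GBPS = {"3G-SDI": 2.97, "6G-SDI": 5.94, "12G-SDI": 11.88}


def active_video_gbps(width: int, height: int, fps: float,
                      bits_per_pixel: int = 20) -> float:
    """Active-picture data rate in Gbit/s.

    Assumes 10-bit 4:2:2 by default (20 bits per pixel on average) and
    ignores blanking intervals and ancillary data.
    """
    return width * height * fps * bits_per_pixel / 1e9


def smallest_sdi_link(rate_gbps: float) -> str:
    """Return the slowest nominal SDI link whose rate covers the payload."""
    for name, capacity in sorted(SDI_LINK_GBPS.items(), key=lambda kv: kv[1]):
        if rate_gbps <= capacity:
            return name
    raise ValueError("payload exceeds a single 12G-SDI link")


if __name__ == "__main__":
    for label, (w, h, fps) in {"1080p60": (1920, 1080, 60),
                               "UHD p60": (3840, 2160, 60)}.items():
        rate = active_video_gbps(w, h, fps)
        print(f"{label}: {rate:.2f} Gbit/s -> {smallest_sdi_link(rate)}")
```

Under these assumptions a UHD 60p picture needs roughly 10 Gbit/s, which is why a single 12G-SDI link is the usual choice, while 1080p60 fits within 3G-SDI.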

Contribution Encoding and Protocol Selection

Once the final program feed is produced, it must be compressed (encoded) and sent over the public internet. This contribution feed is distinct from the final distribution feeds that viewers watch. For years, RTMP (Real-Time Messaging Protocol) was the standard. However, RTMP runs over TCP (Transmission Control Protocol), which responds to packet loss by retransmitting data in order and throttling its send rate; on a lossy or congested link, throughput can fall below the stream bitrate, causing buffering and stream failure. While RTMPS adds a necessary layer of security, it does not solve the underlying transport problem. A widely adopted modern choice for contribution is SRT (Secure Reliable Transport). SRT is a UDP-based protocol that includes an ARQ (Automatic Repeat reQuest) mechanism to recover lost packets within a configurable latency window, mitigating the effects of jitter and bandwidth fluctuations common on public internet links. This makes it well suited for pushing a high-quality feed out of a CBD location. An enterprise-grade hardware encoder will take the SDI program feed and encode it using a codec like H.264 or the more efficient H.265 (HEVC), typically at a bitrate between 10 and 20 Mbps for a pristine 1080p60 HD signal, before wrapping it in the SRT protocol for transport.
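Two numbers usually need to be chosen before an SRT contribution link goes live: the receive latency budget and the uplink capacity to reserve. The sketch below uses commonly quoted rules of thumb as assumptions, not vendor guidance: latency of about four times the measured round-trip time with a 120 ms floor, and 25% bandwidth headroom for retransmissions. The function names and defaults are illustrative and should be tuned against real link measurements.

```python
def srt_latency_ms(rtt_ms: float, rtt_multiplier: float = 4.0,
                   floor_ms: float = 120.0) -> float:
    """Suggested SRT latency budget in milliseconds.

    Assumption: a multiple of the round-trip time gives ARQ enough time
    for several retransmission attempts; lossier links may need a
    larger multiplier.
    """
    return max(floor_ms, rtt_ms * rtt_multiplier)


def required_uplink_mbps(video_mbps: float, audio_mbps: float = 0.256,
                         overhead_pct: float = 25.0) -> float:
    """Uplink capacity to reserve, including retransmission headroom."""
    return (video_mbps + audio_mbps) * (1 + overhead_pct / 100)


if __name__ == "__main__":
    # Hypothetical link: 80 ms RTT to the cloud ingest, 15 Mbps video.
    print(f"latency budget: {srt_latency_ms(80):.0f} ms")
    print(f"uplink to reserve: {required_uplink_mbps(15):.1f} Mbps")
```

The point of the calculation is that a 15 Mbps encode does not fit comfortably on a 15 Mbps uplink; the recovery mechanism only works if there is spare capacity for the resent packets.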

Architecting Resilient Global Distribution Networks

With a stable, high-quality contribution feed successfully transmitted from the CBD hub, the next stage involves processing and distributing this signal to a global audience with minimal latency and maximum reliability. This is accomplished through a sophisticated cloud-based media workflow, leveraging transcoding services and a global Content Delivery Network (CDN) to handle viewership at scale.

Cloud-Based Transcoding and Playout

The single SRT contribution feed arrives at a primary cloud ingest point, typically hosted on a major cloud platform like AWS, Google Cloud, or Azure, or through a specialized media service provider. This is where the signal is prepared for mass distribution. A cloud transcoding engine, such as AWS Elemental MediaLive, takes the high-bitrate contribution feed and creates multiple lower-bitrate versions in real-time. This process, known as creating an adaptive bitrate (ABR) ladder, ensures that viewers with varying internet speeds receive the best possible quality without buffering. A typical ABR ladder might include renditions at 1080p, 720p, 540p, and 360p. These renditions are then packaged into modern HTTP-based streaming formats: HLS (HTTP Live Streaming), which Apple devices expect and most other players also support, and DASH (Dynamic Adaptive Streaming over HTTP). This packaging segments the video into small chunks that can be delivered efficiently over standard web servers.
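The ABR ladder described above can be sketched as a small data structure plus the HLS master playlist that advertises it. The bitrates, the `name/index.m3u8` path layout, and the 0.8 safety factor are illustrative assumptions; real ladders are tuned per event, and real players use more sophisticated selection logic than the simple rule shown here.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Rendition:
    name: str
    width: int
    height: int
    bandwidth_bps: int  # peak bitrate advertised to the player


# Hypothetical ladder matching the resolutions named in the text.
LADDER = [
    Rendition("1080p", 1920, 1080, 6_000_000),
    Rendition("720p", 1280, 720, 3_500_000),
    Rendition("540p", 960, 540, 2_000_000),
    Rendition("360p", 640, 360, 800_000),
]


def master_playlist(ladder: List[Rendition]) -> str:
    """Build a minimal HLS master playlist listing every rendition."""
    lines = ["#EXTM3U"]
    for r in ladder:
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={r.bandwidth_bps},"
            f"RESOLUTION={r.width}x{r.height}"
        )
        lines.append(f"{r.name}/index.m3u8")
    return "\n".join(lines) + "\n"


def pick_rendition(ladder: List[Rendition], measured_bps: float,
                   safety: float = 0.8) -> Rendition:
    """Pick the highest rendition that fits within a fraction of the
    measured throughput; fall back to the lowest one if none fits."""
    budget = measured_bps * safety
    fitting = [r for r in ladder if r.bandwidth_bps <= budget]
    if fitting:
        return max(fitting, key=lambda r: r.bandwidth_bps)
    return min(ladder, key=lambda r: r.bandwidth_bps)


if __name__ == "__main__":
    print(master_playlist(LADDER))
    print(pick_rendition(LADDER, measured_bps=5_000_000).name)
```

A viewer measuring about 5 Mbps would land on the 720p rendition under these assumptions, leaving headroom so that a brief throughput dip does not immediately cause a stall.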

Leveraging a Global Content Delivery Network (CDN)

Distributing the HLS and DASH packages to thousands or tens of thousands of viewers simultaneously is the role of a CDN. A CDN is a vast, geographically distributed network of edge servers. When a viewer in London requests the video, the CDN serves the video chunks from a local server in Europe, not from the origin server in North America. This drastically reduces the Time to First Byte (TTFB) and minimizes latency, resulting in a fast-starting, smooth viewing experience. For enterprise broadcasts, using a CDN with robust security features is non-negotiable. Features like token-based authentication prevent unauthorized sharing of the stream link, while geo-blocking can restrict viewing to specific countries or regions, ensuring sensitive corporate communications remain within the intended audience.
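Token-based authentication generally works by having the event portal sign each playback URL with a shared secret and an expiry time, which the CDN edge then verifies before serving content. Each CDN has its own token format, so the sketch below is a generic HMAC-SHA256 illustration rather than any vendor's scheme; the query parameter names, path, and secret are assumptions.

```python
import hashlib
import hmac
import time
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def _signature(path: str, expires_at: int, secret: bytes) -> str:
    """HMAC-SHA256 over the path and expiry, hex encoded."""
    message = f"{path}:{expires_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def sign_url(path: str, secret: bytes, expires_at: int) -> str:
    """Append an expiry timestamp and token to a playback path."""
    token = _signature(path, expires_at, secret)
    return f"{path}?expires={expires_at}&token={token}"


def verify_url(url: str, secret: bytes, now: Optional[float] = None) -> bool:
    """Edge-side check: reject expired or tampered URLs."""
    parts = urlsplit(url)
    query = parse_qs(parts.query)
    try:
        expires_at = int(query["expires"][0])
        token = query["token"][0]
    except (KeyError, ValueError):
        return False
    current = time.time() if now is None else now
    if current > expires_at:
        return False
    expected = _signature(parts.path, expires_at, secret)
    # Constant-time comparison avoids leaking the token via timing.
    return hmac.compare_digest(expected, token)


if __name__ == "__main__":
    secret = b"example-shared-secret"  # hypothetical; load from a vault
    url = sign_url("/live/townhall/master.m3u8", secret,
                   expires_at=int(time.time()) + 3600)
    print(url, verify_url(url, secret))
```

Short expiry windows limit how long a leaked link stays useful; binding the token to additional attributes such as a viewer ID is a common extension that this sketch leaves out.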

Integrating Hybrid Event Production and Enterprise Platforms

Modern corporate events are rarely just for a physical audience. They are complex hybrid productions that must seamlessly merge the in-room experience with a parallel, engaging experience for the remote audience, often integrating with the very Unified Communications (UC) platforms that enterprises use daily.

Managing Complex Audio for Hybrid Audiences

Audio is arguably more critical than video, and in a hybrid setting, its complexity multiplies. There must be two distinct audio programs: one for the in-room PA system and a separate, carefully crafted mix for the broadcast audience. A critical component of this is creating a “mix-minus” for any remote presenters or participants. This means sending them a full program mix of the event audio, *minus* their own microphone audio, to prevent them from hearing a delayed echo of their own voice, which is highly disorienting. Professional audio consoles route these complex mixes, often leveraging IP audio protocols like Dante or AES67 to send multiple channels of audio over the same network infrastructure used for video, providing immense flexibility in routing and control.
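The mix-minus idea is easiest to see as arithmetic: each remote participant receives the sum of every source except their own. The following sketch models sources as short lists of sample values; the source names are hypothetical, and a real console applies gain, processing, and limiting that are omitted here.

```python
from typing import Dict, List, Optional


def mix(sources: Dict[str, List[float]],
        exclude: Optional[str] = None) -> List[float]:
    """Sum all sources sample by sample, optionally leaving one out.

    With exclude=None this is the full program mix; with a source name
    it is that participant's mix-minus return feed.
    """
    active = [samples for name, samples in sources.items() if name != exclude]
    if not active:
        return []
    return [sum(frame) for frame in zip(*active)]


if __name__ == "__main__":
    sources = {
        "podium_mic": [0.5, 0.25],
        "remote_a": [0.25, 0.25],
        "remote_b": [0.0, 0.5],
    }
    print("program:", mix(sources))
    for participant in ("remote_a", "remote_b"):
        print(f"return to {participant}:", mix(sources, exclude=participant))
```

Each remote participant needs an individual return bus, which is why the number of mix-minus feeds grows with the number of remote contributors.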

Bridging Broadcast Workflows with Unified Communications Platforms

A key challenge in hybrid events is bringing remote speakers from platforms like Microsoft Teams, Zoom, or Webex into the main broadcast production with high quality. Simply screen-capturing a laptop is not a professional solution. Instead, dedicated hardware and software solutions are used to extract clean, isolated video and audio feeds (known as ISOs) from the UC platform. These feeds are then converted into a broadcast-standard signal, like SDI or NDI, allowing the technical director to switch to the remote speaker just as they would any other camera in the room. This integration also requires managing return feeds. The remote presenter needs to see the main program video and hear the event audio to feel connected. This return signal must be delivered with the lowest possible latency to enable natural interaction with the in-room presenters and audience, requiring a carefully managed signal flow from the production switcher back to the UC platform.

Ensuring Broadcast-Grade Redundancy and Monitoring

For any mission-critical corporate broadcast, failure is not an option. This necessitates a comprehensive redundancy strategy that covers every potential point of failure, from the on-site camera to the final delivery CDN. This is coupled with rigorous real-time monitoring to detect and resolve issues before they impact the viewing audience.

Implementing Failover Paths and Redundancy

Redundancy begins at the source. Critical presentations may use an A/B system with two identical cameras and microphones. This extends to the production core, with redundant switchers and power supplies. The most critical area for redundancy is the internet egress path. Relying solely on the building’s primary fiber internet is a significant risk. A common strategy is to use a secondary, diverse path. Bonded cellular technology, from providers like LiveU or TVU Networks, is an excellent solution. A bonded cellular unit aggregates bandwidth from multiple cellular carriers (e.g., AT&T, Verizon, T-Mobile) into a single, robust internet connection. The primary SRT feed can be sent over the fiber line, while a synchronized, fully redundant feed is sent over the bonded cellular path to a secondary cloud ingest point. If the primary path fails, the cloud infrastructure can switch to the backup feed seamlessly. This redundancy can be extended with multi-region cloud deployments and even multi-CDN strategies to protect against platform-level outages.
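The primary and backup switching described above can be sketched as a small state machine. This is a simplified illustration, not how any particular cloud service implements input failover: the health fields, the 5% loss threshold, and the rule of waiting for three consecutive healthy checks before returning to the primary are all assumptions, chosen to show why some hysteresis is useful to avoid flapping between paths.

```python
from dataclasses import dataclass


@dataclass
class FeedHealth:
    connected: bool
    packet_loss_pct: float


class FailoverSelector:
    """Choose between the 'primary' (fiber) and 'backup' (bonded
    cellular) feeds based on periodic health checks."""

    def __init__(self, max_loss_pct: float = 5.0, recover_after: int = 3):
        self.max_loss_pct = max_loss_pct
        self.recover_after = recover_after
        self.active = "primary"
        self._good_streak = 0

    def _healthy(self, feed: FeedHealth) -> bool:
        return feed.connected and feed.packet_loss_pct <= self.max_loss_pct

    def update(self, primary: FeedHealth, backup: FeedHealth) -> str:
        primary_ok = self._healthy(primary)
        if self.active == "primary":
            # Fail over only if the backup is actually usable.
            if not primary_ok and self._healthy(backup):
                self.active = "backup"
                self._good_streak = 0
        else:
            # Require several consecutive good checks before failing back.
            self._good_streak = self._good_streak + 1 if primary_ok else 0
            if self._good_streak >= self.recover_after:
                self.active = "primary"
        return self.active


if __name__ == "__main__":
    selector = FailoverSelector()
    good = FeedHealth(connected=True, packet_loss_pct=0.1)
    down = FeedHealth(connected=False, packet_loss_pct=100.0)
    for primary in (good, down, good, good, good):
        print(selector.update(primary, backup=good))
```

Without the recovery streak, a primary link that alternates between good and bad checks would cause a visible switch on every check.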

Real-Time Monitoring and Quality of Service (QoS)

Throughout the broadcast, a team of engineers must monitor the entire signal chain. This involves more than just watching the video. Specialized tools are used to monitor the health of the SRT contribution feeds, tracking metrics like latency, packet loss percentage, and available bandwidth in real-time. Waveform monitors and vectorscopes are used to ensure video signals are within legal broadcast limits and that colors are accurate. Audio engineers monitor levels using LUFS (Loudness Units relative to Full Scale) meters to comply with broadcast loudness standards and ensure a consistent experience for all viewers. Dedicated QoS platforms provide a global view of CDN performance, tracking metrics like buffering rates and startup times across different regions, allowing engineers to proactively manage the viewer experience.
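Much of this monitoring reduces to computing a few ratios from raw counters and comparing them against agreed limits. The sketch below shows that pattern; the metric names and threshold values are illustrative assumptions, and a production setup would read these counters from the encoder, the ingest service, and the player analytics rather than from hard-coded numbers.

```python
from typing import Dict, List


def packet_loss_pct(packets_sent: int, packets_lost: int) -> float:
    """Lost packets as a percentage of packets sent."""
    return 100 * packets_lost / packets_sent if packets_sent else 0.0


def rebuffer_ratio_pct(stall_seconds: float, watch_seconds: float) -> float:
    """Share of viewing time spent stalled, as a percentage."""
    return 100 * stall_seconds / watch_seconds if watch_seconds else 0.0


# Hypothetical alert limits; agree on real values before the event.
THRESHOLDS = {
    "packet_loss_pct": 1.0,
    "rtt_ms": 150.0,
    "rebuffer_ratio_pct": 0.5,
}


def breached(metrics: Dict[str, float],
             thresholds: Dict[str, float]) -> List[str]:
    """Return the names of metrics that exceed their limit."""
    return sorted(
        name for name, limit in thresholds.items()
        if metrics.get(name, 0.0) > limit
    )


if __name__ == "__main__":
    snapshot = {
        "packet_loss_pct": packet_loss_pct(packets_sent=2000, packets_lost=50),
        "rtt_ms": 40.0,
        "rebuffer_ratio_pct": rebuffer_ratio_pct(stall_seconds=3,
                                                 watch_seconds=1800),
    }
    print(breached(snapshot, THRESHOLDS))
```

Tracking contribution metrics (loss, round-trip time) separately from viewer-side metrics (rebuffering) helps localize a fault to the first mile or to the distribution side.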

Ultimately, transforming a corporate message from a CBD headquarters into a seamless global broadcast is an exercise in precision engineering. It requires a holistic approach that balances on-site production excellence with a resilient and scalable cloud architecture. By leveraging professional-grade equipment, modern transport protocols like SRT, and a multi-layered redundancy strategy, MNCs can execute flawless, high-impact global events that connect and engage audiences anywhere in the world.