March 11, 2026 by Editor |

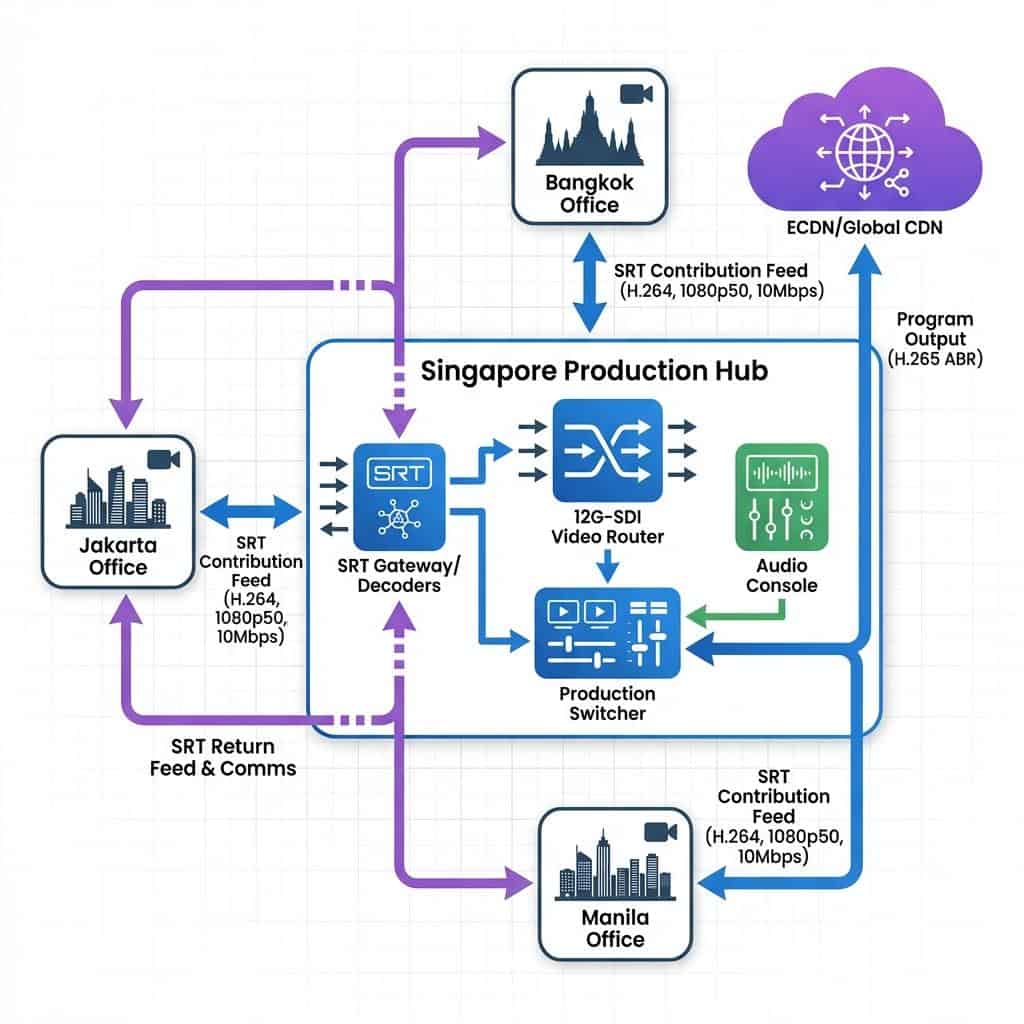

Regional Leadership: Connecting ASEAN Offices via Singaporean Stream Hubs

For multinational corporations operating across the Association of Southeast Asian Nations (ASEAN), delivering cohesive, technically reliable internal events such as all-hands meetings, leadership summits, and critical training sessions presents a significant logistical and technical challenge. Offices are geographically dispersed, and network infrastructure varies widely across the region, which can degrade the quality and reliability of real-time video communications. The conventional approach of deploying full-scale production teams to each location is cost-prohibitive and produces inconsistent results. A centralized architecture addresses both problems. This article details the technical framework for establishing a Singapore-based production and streaming hub. The model leverages the country's dense international connectivity, including multiple submarine cable landings and a large concentration of data centers, to ingest, produce, and distribute high-value corporate events for a regional audience.

Architecting the Singaporean Hub: Core Infrastructure and Signal Ingest

The fundamental principle of a streaming hub is to centralize the most complex and resource-intensive aspects of live production: switching, graphics, audio mixing, and encoding. Instead of decentralized, variable-quality productions, remote ASEAN offices contribute high-quality, single-camera feeds to the Singapore hub, where a master control room (MCR) produces a polished, broadcast-grade program. This hub-and-spoke topology hinges on robust signal ingest and processing capabilities.

On-Premise vs. Cloud-Based Hub Deployment

The initial architectural decision is whether to build the hub using on-premise hardware or cloud-based services. An on-premise hub, built within a data center or a dedicated facility in Singapore, offers maximum control over the signal chain and keeps the security boundary entirely under the organization's control. It relies on dedicated broadcast hardware such as Serial Digital Interface (SDI) routers (e.g., Blackmagic Design Videohub, AJA KUMO) for baseband video, dedicated hardware encoders, and physical production switchers. This approach suits organizations with stringent data sovereignty requirements or a need for near-zero, sub-frame latency within the MCR. Conversely, a cloud-based hub built on services like AWS Elemental MediaConnect or Zixi ZEN Master offers rapid scalability and operational flexibility without significant capital expenditure on hardware. Virtualized production tools like vMix or Grass Valley AMPP can run on cloud instances and process the incoming IP video streams. A hybrid model is often the most effective solution. An on-premise MCR handles primary production, while the cloud provides overflow capacity, disaster recovery, and global distribution.

Contribution Protocols: SRT as the Regional Standard

The reliability of the entire system depends on the protocol used to transport video feeds from remote offices in Jakarta, Kuala Lumpur, or Ho Chi Minh City across the public internet to Singapore. Real-Time Messaging Protocol (RTMP) has long been a common choice, but it is poorly suited to professional contribution. Because it runs over TCP, a lost packet stalls delivery of everything behind it until the retransmission arrives, so latency grows unpredictably when packet loss occurs. The stronger choice is Secure Reliable Transport (SRT). SRT is an open-source protocol built on UDP. It recovers lost packets through selective retransmission (Automatic Repeat reQuest, ARQ) within a fixed, configurable latency window, and it supports AES encryption of the stream. These properties make it well suited to professional contribution over unpredictable networks, and it is widely adopted for that purpose. For a typical 1080p50 feed, an SRT stream carrying H.264 at 8-10 Mbps delivers a broadcast-quality signal. The SRT latency buffer is commonly set to about four times the round-trip time (RTT) between the remote office and the Singapore hub, and increased on links with heavier packet loss. Within the hub itself, Network Device Interface (NDI) offers a high-quality, low-latency way to route video between cameras, switchers, and graphics systems over a standard 1GbE or 10GbE network.
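
The latency rule of thumb above can be turned into a small helper. The following is a minimal Python sketch that assumes the 4x RTT multiplier described here and uses libsrt's default 120 ms latency as a floor. It also builds a caller URL in FFmpeg's `srt://` syntax, where the `latency` option is expressed in microseconds. The hostname, port, stream ID, and RTT value are hypothetical placeholders.

```python
from typing import Optional
from urllib.parse import urlencode

SRT_DEFAULT_LATENCY_MS = 120  # libsrt's default receiver latency, used here as a floor


def srt_latency_ms(rtt_ms: float, multiplier: float = 4.0) -> int:
    """Size the SRT latency buffer as multiplier x RTT, never below the default."""
    if rtt_ms <= 0:
        raise ValueError("RTT must be positive")
    return max(SRT_DEFAULT_LATENCY_MS, round(rtt_ms * multiplier))


def ffmpeg_srt_url(host: str, port: int, latency_ms: int,
                   passphrase: Optional[str] = None,
                   stream_id: Optional[str] = None) -> str:
    """Build an SRT caller URL using FFmpeg's option names.

    FFmpeg's `latency` option takes microseconds, so milliseconds are scaled.
    """
    params = {"mode": "caller", "latency": latency_ms * 1000}
    if passphrase is not None:
        # SRT passphrases must be 10 to 79 characters long.
        if not 10 <= len(passphrase) <= 79:
            raise ValueError("SRT passphrase must be 10-79 characters")
        params["passphrase"] = passphrase
        params["pbkeylen"] = 32  # 32-byte key, i.e. AES-256
    if stream_id is not None:
        params["streamid"] = stream_id
    return f"srt://{host}:{port}?{urlencode(params)}"


if __name__ == "__main__":
    # Hypothetical 40 ms RTT between a Jakarta office and the hub.
    latency = srt_latency_ms(40)
    print(latency)  # 160
    print(ffmpeg_srt_url("hub.example.com", 9000, latency, stream_id="jakarta"))
```

The resulting URL can be used as the output of an FFmpeg command that encodes the camera feed to H.264 in an MPEG-TS container (`-f mpegts`). On lossy cellular or congested links, a multiplier above 4 is a reasonable starting point.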

Signal Ingest, Decoding, and Routing

Once the SRT feeds arrive at the Singapore hub's public IP address, they must be decoded back to baseband video (SDI) or converted to another usable IP format (such as NDI or SMPTE ST 2110). Dedicated SRT gateways or hardware decoders handle this step, such as the Haivision Makito X4 or Kiloview decoders. A bank of these decoders receives the incoming streams and outputs each one as an SDI signal: 3G-SDI for 1080p feeds, or 12G-SDI for 2160p (4K) feeds. These SDI outputs are then fed into a central video router. The router acts as the core of the facility, allowing any incoming source to be patched to any destination. Typical destinations include the inputs of the main production switcher, multiview monitors, and recorders for isolated (ISO) recording of each feed. This centralized routing provides considerable flexibility and lets engineers manage and monitor all incoming signals from a single point of control.
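
A router's any-source-to-any-destination behavior can be modeled as a crosspoint map: each destination holds exactly one source, while one source can feed many destinations. The following is a minimal Python sketch of that model. The source and destination names are hypothetical, and the sketch does not control real router hardware, which vendors drive through their own control protocols.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CrosspointRouter:
    """Toy model of a video router: each destination carries one source at a time."""
    sources: List[str]
    destinations: List[str]
    crosspoints: Dict[str, str] = field(default_factory=dict)

    def take(self, source: str, destination: str) -> None:
        """Route source to destination, replacing whatever was routed there."""
        if source not in self.sources:
            raise KeyError(f"unknown source {source!r}")
        if destination not in self.destinations:
            raise KeyError(f"unknown destination {destination!r}")
        self.crosspoints[destination] = source

    def fanout(self, source: str) -> List[str]:
        """List every destination currently fed by source."""
        return [d for d, s in self.crosspoints.items() if s == source]


if __name__ == "__main__":
    # Hypothetical decoder outputs (sources) and facility destinations.
    router = CrosspointRouter(
        sources=["DEC-JKT", "DEC-KUL", "DEC-HCMC"],
        destinations=["SWITCHER-IN1", "SWITCHER-IN2", "MULTIVIEW-1", "ISO-REC-1"],
    )
    router.take("DEC-JKT", "SWITCHER-IN1")
    router.take("DEC-JKT", "ISO-REC-1")  # same feed also goes to its ISO recorder
    router.take("DEC-KUL", "SWITCHER-IN2")
    print(router.fanout("DEC-JKT"))  # ['SWITCHER-IN1', 'ISO-REC-1']
```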

Centralized Production Workflow and Remote Operation

With all video and audio sources successfully ingested and routed within the Singapore hub, the production team can execute a seamless, multi-camera broadcast. This centralized model ensures brand consistency, uniform graphical elements, and superior audio quality, regardless of the origin of the contribution feed. The key is to manage the complexity of multiple remote sources as if they were local studio cameras.

Master Control Room (MCR) Design and Operation

The MCR is the operational heart of the hub. It is designed for a technical director, audio engineer, and graphics operator to work in concert. A production switcher, like a Ross Video Carbonite or NewTek TriCaster 2 Elite, serves as the primary creative tool for cutting between remote presenters, playing back video content, and integrating graphics. Multiview monitors are critical, displaying all incoming SRT feeds, program and preview outputs from the switcher, and technical monitoring scopes. The ability to see every source simultaneously is non-negotiable for professional production. Color grading and matching between cameras from different locations can be performed here using upstream color correctors or tools within the switcher to ensure a consistent look across all feeds.
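
Multiview screens are usually built from fixed layout templates. The sketch below computes tile rectangles for one common arrangement on a 1920x1080 monitor. Preview and program sit side by side across the top half, and up to eight source tiles fill a 4x2 grid below. The layout, resolution, and source names are assumptions for illustration; real multiviewers and switchers provide their own preset layouts.

```python
from typing import Dict, List, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


def multiview_layout(sources: List[str], width: int = 1920, height: int = 1080,
                     cols: int = 4, rows: int = 2) -> Dict[str, Rect]:
    """Place PVW/PGM across the top half and source tiles in a grid below."""
    half_w, half_h = width // 2, height // 2
    tiles: Dict[str, Rect] = {
        "PVW": (0, 0, half_w, half_h),       # preview on the left
        "PGM": (half_w, 0, half_w, half_h),  # program on the right
    }
    if len(sources) > cols * rows:
        raise ValueError(f"layout holds at most {cols * rows} sources")
    tile_w, tile_h = width // cols, half_h // rows
    for i, name in enumerate(sources):
        row, col = divmod(i, cols)
        tiles[name] = (col * tile_w, half_h + row * tile_h, tile_w, tile_h)
    return tiles


if __name__ == "__main__":
    # Hypothetical incoming feeds plus a local playback source.
    for name, rect in multiview_layout(["JKT", "KUL", "HCMC", "PLAYBACK"]).items():
        print(name, rect)
```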

Managing Audio Complexity and Multilingual Support

Audio is arguably more critical than video in a corporate setting. Each remote location will contribute at least one audio source. These audio signals, embedded in the SRT stream, are de-embedded at the hub and fed into a digital audio console (e.g., a Yamaha QL Series or Behringer Wing). The audio engineer is responsible for creating a clean final program mix, ensuring levels are consistent, and applying equalization and dynamics processing. For events with remote Q&A or panel discussions, a mix-minus feed must be generated for each remote contributor. This is a custom audio mix that contains the main program audio minus the contributor’s own microphone, preventing them from hearing a delayed echo of their own voice. For multilingual events, multiple audio busses can be used to create separate language mixes, which are then embedded as distinct audio channels into the final distribution feed.
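
The mix-minus can be computed efficiently by summing the full program once and then subtracting each contributor's own signal, instead of building every return mix from scratch. The sketch below shows that approach on blocks of floating-point samples in plain Python. It assumes sample-aligned mono sources and simple per-source fader gains (defaulting to unity), whereas a real console does this in its bus routing. Source names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Block = List[float]  # one block of mono samples; all sources have the same length


def mix_minus(blocks: Dict[str, Block], contributors: List[str],
              gains: Optional[Dict[str, float]] = None) -> Tuple[Block, Dict[str, Block]]:
    """Return the program mix and one mix-minus return feed per contributor."""
    gains = gains or {}
    length = len(next(iter(blocks.values())))
    program = [0.0] * length
    for source, samples in blocks.items():
        g = gains.get(source, 1.0)
        for i, x in enumerate(samples):
            program[i] += g * x
    # Each return feed is the program minus that contributor's own (gained) mic,
    # so they never hear their own delayed voice coming back.
    returns = {}
    for c in contributors:
        g = gains.get(c, 1.0)
        returns[c] = [p - g * x for p, x in zip(program, blocks[c])]
    return program, returns


if __name__ == "__main__":
    program, returns = mix_minus(
        {"SG-HOST": [0.5, 0.25], "JKT": [0.125, 0.0], "KUL": [0.0, 0.5]},
        contributors=["JKT", "KUL"],
    )
    print(program)         # [0.625, 0.75]
    print(returns["JKT"])  # [0.5, 0.75]
```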

Standardized Remote Contribution Kits

To ensure technical quality and consistency from the remote ASEAN offices, standardized “flypacks” or contribution kits are essential. These kits are pre-configured and shipped to the remote locations. A typical kit includes a professional camera (e.g., Sony FX6, Canon C200) capable of outputting a clean SDI or HDMI signal, a hardware SRT encoder (like a Kiloview E2 or AJA HELO Plus), a high-quality microphone (e.g., Sennheiser MKH 416), a key light and fill light, and a small monitor to show the program return feed. The encoder is pre-programmed with the Singapore hub’s IP address and SRT credentials, making setup for local staff a simple plug-and-play process. A robust talkback system, either embedded in the SRT stream or running over a separate IP-based system like Unity Intercom, is also included for real-time communication between the MCR director and the remote presenter.
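
Pre-programming the kits is easier to manage when every office's encoder settings are generated from a single source of truth. The sketch below produces one record per office, each with a dedicated hub listener port, a stream ID, and a randomly generated SRT passphrase. The hostname, port range, field names, and video settings are hypothetical, and each encoder vendor has its own configuration interface for applying them.

```python
import json
import secrets
from typing import Dict, List

HUB_HOST = "srt-hub.example.com"  # hypothetical public hostname of the Singapore hub
BASE_PORT = 9001                  # hypothetical first SRT listener port on the hub


def kit_configs(offices: List[str]) -> Dict[str, dict]:
    """Build one encoder config per office; each office gets its own hub port."""
    configs = {}
    for i, office in enumerate(offices):
        configs[office] = {
            "srt_mode": "caller",  # kit dials out to the hub's listener
            "host": HUB_HOST,
            "port": BASE_PORT + i,
            "stream_id": "contrib/" + office.lower().replace(" ", "-"),
            # token_urlsafe(24) yields 32 characters, inside SRT's 10-79 limit.
            "passphrase": secrets.token_urlsafe(24),
            "video": {"codec": "H.264", "format": "1080p50", "bitrate_kbps": 9000},
        }
    return configs


if __name__ == "__main__":
    print(json.dumps(kit_configs(["Jakarta", "Kuala Lumpur", "Ho Chi Minh City"]), indent=2))
```

With the kits calling out to the hub in caller mode, remote offices generally need no inbound firewall rules; only the hub exposes listener ports.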

Distribution, Redundancy, and Enterprise Integration

A successful production is only complete once the final program is delivered reliably to the intended audience, whether they are internal employees on a corporate network or external stakeholders. The Singapore hub is perfectly positioned to manage this complex distribution and integration phase.

Multi-Platform Distribution and ECDN Integration

From the production switcher's program output, the final mixed feed is sent to a bank of distribution encoders. These encoders create an adaptive bitrate (ABR) ladder. The source is encoded into multiple simultaneous renditions at different resolutions and bitrates (e.g., 1080p at 6 Mbps, 720p at 3 Mbps, 360p at 800 kbps), and each viewer's player switches between renditions as its available bandwidth changes. The renditions are then published to a Content Delivery Network (CDN), either pushed as live streams over RTMP or SRT to an ingest point or delivered as segmented HLS or DASH output. For internal corporate viewers, this CDN is often an Enterprise CDN (ECDN) like Kollective, Ramp, or Hive, which optimizes video delivery within the corporate WAN to prevent network saturation. For public-facing events, the feed is sent to a global CDN like Akamai or Cloudflare for broad distribution.
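
The example ladder also shows why an ECDN matters. The sketch below uses the example rungs from this section to compute the total encoder output. It then compares two cases for one office. With plain unicast delivery, every viewer pulls its own copy. In an idealized ECDN best case, only one copy of each rendition crosses the WAN link into the site. The viewer count is hypothetical, and actual ECDN savings depend on the product and the network.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Rung:
    name: str
    width: int
    height: int
    bitrate_kbps: int


# Example ladder from the text: 1080p at 6 Mbps, 720p at 3 Mbps, 360p at 800 kbps.
LADDER: List[Rung] = [
    Rung("1080p", 1920, 1080, 6000),
    Rung("720p", 1280, 720, 3000),
    Rung("360p", 640, 360, 800),
]


def encoder_output_mbps(ladder: List[Rung]) -> float:
    """Total bitrate leaving the distribution encoders (all renditions at once)."""
    return sum(r.bitrate_kbps for r in ladder) / 1000


def unicast_wan_mbps(viewers: int, rung: Rung) -> float:
    """WAN load when every viewer at a site pulls its own copy of one rendition."""
    return viewers * rung.bitrate_kbps / 1000


def ecdn_best_case_wan_mbps(ladder: List[Rung]) -> float:
    """Idealized ECDN: one copy of each rendition crosses the WAN into the site."""
    return encoder_output_mbps(ladder)


if __name__ == "__main__":
    viewers = 400  # hypothetical audience at one office, all on the 720p rung
    print(encoder_output_mbps(LADDER))           # 9.8
    print(unicast_wan_mbps(viewers, LADDER[1]))  # 1200.0
    print(ecdn_best_case_wan_mbps(LADDER))       # 9.8
```

In this hypothetical case, unicast delivery needs about 1.2 Gbps on the office link, compared with under 10 Mbps in the ECDN best case. That difference is the saturation problem an ECDN is meant to prevent.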

Integrating with Corporate Platforms: Teams, Zoom, and Webex

Many hybrid events require the high production value of the main broadcast to be integrated into familiar collaboration platforms. The professional program output from the MCR (typically an SDI signal) can be fed into a capture device (e.g., a Blackmagic Design Web Presenter 4K or Magewell USB Capture SDI Gen 2). This device converts the SDI signal into a UVC (USB Video Class) source, making the entire broadcast production appear as a simple webcam within a Microsoft Teams, Zoom, or Webex meeting. Remote employees can then participate through their standard collaboration tool while viewing a professionally produced broadcast, complete with graphics, lower thirds, and multiple camera angles, rather than a single low-quality webcam feed.

Building Mission-Critical Redundancy

For high-stakes B2B events, failure is not an option. Redundancy must be engineered into every layer of the workflow. At the remote sites, bonded cellular technology (e.g., LiveU, Pepwave) can be used to combine multiple cellular networks and a wired internet connection into a single, highly resilient connection for the SRT feed. Within the Singapore hub, all critical equipment, including routers, switchers, and power supplies, should be fully redundant (1+1 or N+1 configuration). Two separate SRT decoders should receive the same stream over diverse network paths. The final distribution should be sent to two geographically separate CDN origins. This multi-layered approach to redundancy ensures that no single point of failure can disrupt the entire event, providing the resilience and peace of mind required for enterprise-level productions.
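
Standard availability arithmetic gives a rough estimate of what each redundant layer is worth, assuming failures are independent. Diverse network paths and separate power feeds aim to approximate that independence. Components in series multiply their availabilities, while a 1+1 pair fails only if both units fail. The per-component figures below are hypothetical placeholders, not vendor data.

```python
from math import prod


def series(*availabilities: float) -> float:
    """Chain of components: every one must work."""
    return prod(availabilities)


def parallel(*availabilities: float) -> float:
    """Redundant group (e.g. 1+1): works unless every member fails."""
    return 1 - prod(1 - a for a in availabilities)


if __name__ == "__main__":
    # Hypothetical availabilities for one event's signal chain.
    link, decoder, switcher, cdn = 0.99, 0.999, 0.999, 0.999
    single_path = series(link, decoder, switcher, cdn)
    redundant = series(
        parallel(link, link),        # wired + bonded cellular contribution paths
        parallel(decoder, decoder),  # two SRT decoders on diverse paths
        parallel(switcher, switcher),
        parallel(cdn, cdn),          # two geographically separate CDN origins
    )
    print(f"single path: {single_path:.5f}")
    print(f"redundant:   {redundant:.5f}")
```

For example, a 1+1 pair of units that are each 99% available gives 1 - 0.01² = 99.99%. This is why duplicating the least reliable layer, usually the contribution link, pays off most.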