March 9, 2026 by Editor

The Boardroom Broadcast: Professional Grade Audio for Sensitive Director Meetings

In the context of high-stakes director meetings and sensitive corporate communications, audio is not merely a component; it is the foundation of comprehension, decision-making, and security. The transition to hybrid meeting models has amplified the complexity, demanding a broadcast-level approach to audio engineering that standard unified communications (UC) solutions are ill-equipped to provide. A single instance of unintelligible speech, distracting echo, or a compromised signal can undermine the integrity of the entire proceeding. Therefore, designing an audio infrastructure for such an environment requires a meticulous, multi-layered strategy that encompasses room acoustics, signal chain integrity, robust network architecture, and encrypted transmission protocols. This is the domain of professional broadcast engineering, applied to the unique security and reliability requirements of the enterprise boardroom.

The core challenge lies in ensuring that every participant, whether physically present or connecting remotely from anywhere in the world, experiences flawless speech intelligibility. This involves managing a complex interplay of acoustic physics and digital signal processing. We must move beyond the simple paradigm of placing a microphone on a table. Instead, we must architect a complete audio ecosystem that actively mitigates environmental noise, eliminates feedback and echo for remote participants through sophisticated mix-minus configurations, and secures the entire signal path from microphone capsule to the remote listener’s ear. This process involves a deep understanding of gain structure, Acoustic Echo Cancellation (AEC), audio-over-IP (AoIP) networking, and secure streaming protocols like Secure Reliable Transport (SRT).

Foundational Acoustics and Microphone Architecture

The first and most critical phase of designing a professional boardroom audio system begins with the physical environment. No amount of advanced digital processing can fully compensate for a fundamentally flawed acoustic space. An audio signal is only as clean as its source capture, and in a boardroom, the room itself is an active part of that source. Overlooking acoustic treatment and microphone selection introduces noise and ambiguity at the very start of the signal chain, which will only be amplified downstream.

Acoustic Treatment and Room Analysis

Before any equipment is specified, a thorough analysis of the room’s acoustic properties is necessary. A key metric is reverberation time (RT60): the time it takes for sound in the room to decay by 60 decibels after the source stops. In a boardroom, a high RT60 produces “roomy,” indistinct audio in which syllables blur together, severely reducing speech intelligibility. The ideal RT60 for a speech-focused space is typically between 0.4 and 0.6 seconds. Achieving this often requires strategic acoustic treatment, such as broadband absorption panels on walls and ceilings to control reflections and bass traps to manage low-frequency buildup. The room’s Noise Criterion (NC) rating, which quantifies background noise from building systems such as HVAC, must also be assessed. An NC rating of 25-30 is considered acceptable for a high-performance meeting space; it keeps the noise floor low enough for microphones to capture clean speech without excessive ambient hiss or rumble.
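
As a rough planning aid, the Sabine equation relates RT60 to room volume and total absorption: RT60 ≈ 0.161 · V / A, with V in cubic metres and A in square-metre sabins (the sum of each surface's area multiplied by its absorption coefficient). The Python sketch below estimates a room's RT60 and the absorption needed to reach a target. The room dimensions and absorption coefficients in the example are hypothetical placeholders, not measured values; a real design would use per-octave-band coefficients from manufacturer data and confirm the result by measurement.

```python
"""Rough RT60 estimate using the Sabine equation (metric units)."""
from dataclasses import dataclass

SABINE_CONSTANT = 0.161  # s/m, metric form of the Sabine equation


@dataclass
class Surface:
    name: str
    area_m2: float  # surface area in square metres
    alpha: float    # absorption coefficient (0.0 to 1.0) in the band of interest


def total_absorption(surfaces):
    """Total absorption A in square-metre sabins: sum of area * alpha."""
    return sum(s.area_m2 * s.alpha for s in surfaces)


def sabine_rt60(volume_m3, surfaces):
    """Estimated reverberation time in seconds."""
    return SABINE_CONSTANT * volume_m3 / total_absorption(surfaces)


def absorption_for_target(volume_m3, target_rt60_s):
    """Total absorption (square-metre sabins) needed to reach a target RT60."""
    return SABINE_CONSTANT * volume_m3 / target_rt60_s


if __name__ == "__main__":
    # Hypothetical 10 m x 6 m x 3 m boardroom with illustrative coefficients.
    room = [
        Surface("floor (carpet)", 60.0, 0.30),
        Surface("ceiling (plasterboard)", 60.0, 0.10),
        Surface("walls (glass and plasterboard)", 96.0, 0.05),
    ]
    volume = 10.0 * 6.0 * 3.0
    rt60 = sabine_rt60(volume, room)
    extra = absorption_for_target(volume, 0.5) - total_absorption(room)
    print(f"Estimated RT60: {rt60:.2f} s")
    print(f"Extra absorption needed for 0.5 s: {extra:.1f} m^2 sabins")
```

For this hypothetical room the estimate is about 1.0 s, roughly double the target, and about 29 square-metre sabins of extra absorption would be needed (around 32 m² of panels with α ≈ 0.9, ignoring the wall area they cover). Sabine's formula tends to overestimate RT60 in heavily treated rooms, where the Eyring equation is usually more accurate, so treat the result as a first estimate rather than a substitute for measurement.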

Strategic Microphone Selection and Placement

With an acoustically optimized environment, the focus shifts to microphone technology. The goal is a high direct-to-reverberant ratio: the microphone should capture more of the speaker’s direct voice and less of the room’s reflected sound. Three types of microphone are commonly deployed for sensitive board meetings. Gooseneck condenser microphones, with tight cardioid or supercardioid pickup patterns, provide excellent off-axis rejection and isolate the intended speaker’s voice, although their visibility can be a concern. Boundary microphones sit flat on the conference table and use the surface to form a hemispherical (or half-cardioid) pickup pattern, but they also pick up table noise such as paper shuffling and keyboard clicks. The most advanced option, and often the most appropriate for high-level meetings, is the ceiling-mounted microphone array. Devices such as the Shure MXA920 and Sennheiser TCC2 use beamforming: an array of internal capsules, combined with Digital Signal Processing (DSP), forms steerable lobes, or pickup zones, that can be aimed at seating positions or configured to follow the active talker. Sound arriving from outside those zones is attenuated, delivering exceptionally clean audio with minimal visual intrusion.
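
The lobe-steering idea can be illustrated with the textbook delay-and-sum beamformer for a straight line of equally spaced capsules: each capsule's signal is delayed so that a wave arriving from the chosen direction lines up across all capsules, and the aligned signals are averaged. Speech from the steered direction adds coherently, while sound from other directions partially cancels. This Python sketch assumes a far-field source, a speed of sound of 343 m/s, and whole-sample delays. Commercial arrays such as those named above use two-dimensional capsule layouts and proprietary, far more sophisticated processing, so this shows only the principle.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at about 20 °C


def steering_delays(num_capsules, spacing_m, angle_deg, sample_rate=48_000):
    """Delays (in samples) that time-align a far-field wave across a uniform linear array.

    angle_deg is the talker direction measured from broadside (perpendicular to
    the array); positive angles point toward the high-index end of the array.
    """
    theta = math.radians(angle_deg)
    # Capsules nearer the talker hear the wave first, so they get the largest delay.
    raw = [n * spacing_m * math.sin(theta) / SPEED_OF_SOUND_M_S * sample_rate
           for n in range(num_capsules)]
    offset = min(raw)  # shift so every delay is non-negative
    return [d - offset for d in raw]


def delay_and_sum(channels, delays_samples):
    """Delay each channel by its steering delay (rounded to whole samples) and average."""
    whole = [round(d) for d in delays_samples]
    out = [0.0] * (len(channels[0]) + max(whole))
    for samples, d in zip(channels, whole):
        for i, x in enumerate(samples):
            out[i + d] += x / len(channels)
    return out


if __name__ == "__main__":
    delays = steering_delays(8, 0.04, 30.0)
    print("Steering delays (samples):", [round(d, 2) for d in delays])
```

Rounding to whole samples keeps the sketch short; a practical beamformer would use fractional-delay filters so the steering angle is not quantized by the sample period.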

Gain Structure and Pre-Amplification

Proper gain structure is fundamental to audio engineering. It refers to the process of setting the signal level optimally at each stage of the audio chain. The process begins at the microphone pre-amplifier. The goal is to raise the low-level microphone signal to a healthy line level, maximizing the signal-to-noise ratio (SNR) without introducing distortion or clipping. In a modern AoIP system, this pre-amplification often occurs in a dedicated network interface or within the primary DSP. Setting gain too low forces downstream devices to add excessive gain, raising the noise floor. Setting it too high will cause digital clipping, an unrecoverable form of distortion. A professional setup ensures that nominal speaking levels average around -18 to -20 dBFS (Decibels Full Scale) in the digital domain, providing ample headroom to handle louder speech without clipping.
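
The level targets above are easy to check numerically. This Python sketch measures the peak and RMS level of a block of floating-point samples normalised so that ±1.0 is digital full scale, reports the remaining headroom, and computes the gain change needed to bring the RMS level to a chosen target. It uses the plain 20·log10 convention for RMS; some meters instead reference RMS to a full-scale sine (as in AES17), which reads about 3 dB higher, so compare numbers only between meters that share a convention. The -18 dBFS default target is taken from the range given above; the test signal is an arbitrary illustration.

```python
import math


def peak_dbfs(samples):
    """Peak level in dBFS for samples normalised to the range -1.0 to 1.0."""
    peak = max(abs(s) for s in samples)
    return float("-inf") if peak == 0 else 20 * math.log10(peak)


def rms_dbfs(samples):
    """RMS level in dBFS (plain 20*log10 of the RMS value, no sine correction)."""
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return float("-inf") if rms == 0 else 20 * math.log10(rms)


def headroom_db(samples):
    """dB remaining between the loudest sample and digital full scale (0 dBFS)."""
    return -peak_dbfs(samples)


def gain_to_target(samples, target_dbfs=-18.0):
    """Gain change in dB that would move the RMS level to target_dbfs."""
    return target_dbfs - rms_dbfs(samples)


if __name__ == "__main__":
    # A quiet test signal: a square wave alternating between +0.03 and -0.03.
    block = [0.03 if i % 2 == 0 else -0.03 for i in range(480)]
    print(f"RMS {rms_dbfs(block):.1f} dBFS, headroom {headroom_db(block):.1f} dB")
    print(f"Change pre-amp gain by {gain_to_target(block):+.1f} dB to reach -18 dBFS")
```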

The Secure Digital Audio Signal Chain

Once the acoustic and capture stages are optimized, the audio signal enters a fully digital workflow. This is where the signal is processed, routed, and secured. In a professional boardroom environment, the entire signal chain must be treated as a secure, mission-critical network, protecting sensitive conversations from both internal and external threats while ensuring technical perfection in the audio processing itself. This requires a robust architecture built on enterprise-grade components and protocols.

The Role of the Digital Signal Processor (DSP)

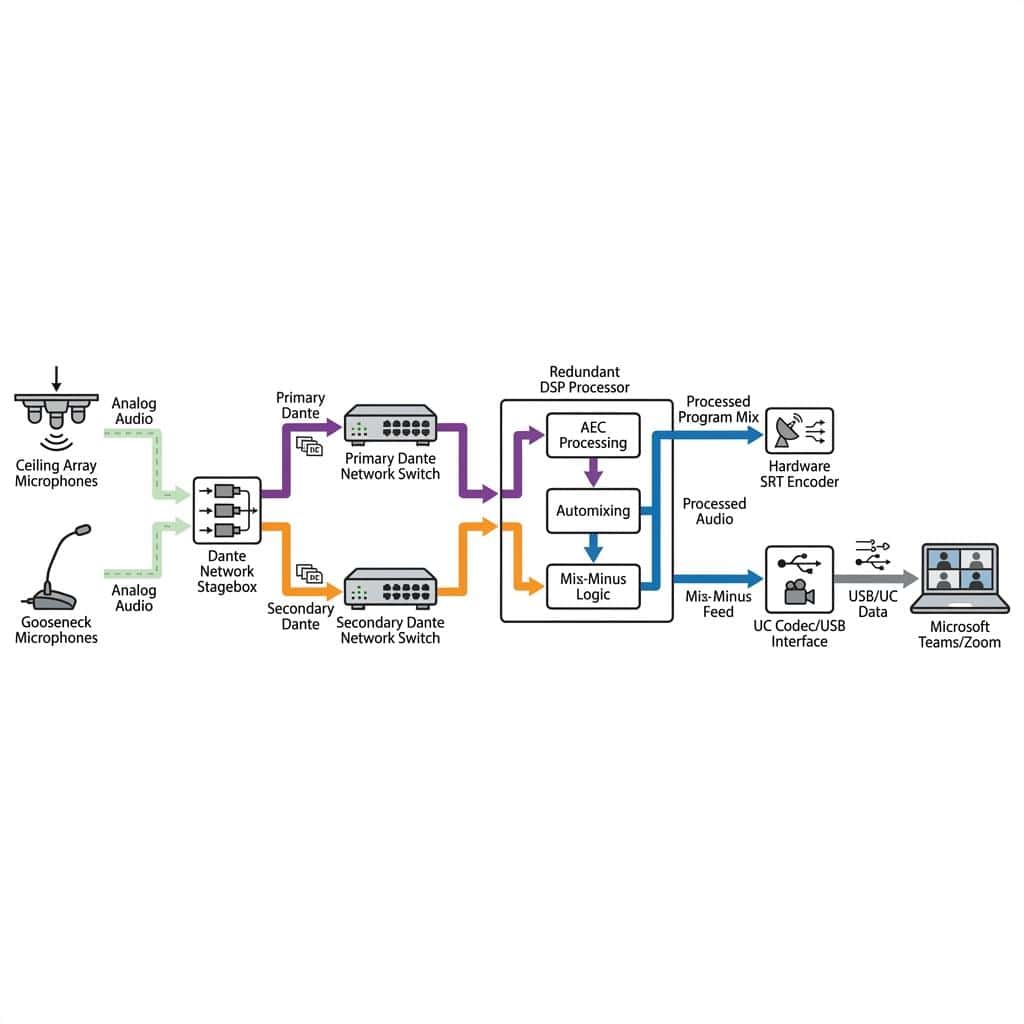

The DSP is the brain of the modern boardroom audio system. This dedicated hardware processes all incoming audio signals in real time to prepare them for distribution, and it performs several key functions. The first is Acoustic Echo Cancellation (AEC), a non-negotiable requirement for any hybrid meeting. The DSP receives a reference feed of the audio arriving from the far-end participants (for example, from a Zoom or Teams call). When that audio plays through the room’s loudspeakers and leaks back into the local microphones, the AEC algorithm models the loudspeaker-to-microphone echo path, identifies the far-end audio in the microphone signals, and subtracts it, so remote participants do not hear a distracting echo of their own voices. The second is automixing. In a room with multiple open microphones, an automixer manages levels intelligently, either attenuating microphones that are not being spoken into (gating) or distributing a constant total system gain across the active microphones (gain sharing). This greatly reduces the buildup of ambient room noise and minimizes the risk of feedback. Finally, the DSP applies equalization (EQ) to tune the audio for speech intelligibility, and dynamics processing such as compression to control loudness variations, ensuring a consistent, clear signal for the final broadcast.
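
To make the AEC description concrete, here is a minimal time-domain echo canceller built on the normalised least-mean-squares (NLMS) adaptive filter, a classic textbook approach. The far-end reference drives an adaptive filter that learns the loudspeaker-to-microphone echo path; its output is subtracted from the microphone signal, and the residual is what would be sent back to the far end. This is a sketch under simplifying assumptions: a linear echo path, no local talker (no double-talk), and no noise. AEC in commercial DSPs typically works in the frequency or subband domain and adds double-talk detection and non-linear residual suppression, none of which is shown here. The filter length, step size, and simulated echo path are illustrative values.

```python
import math
import random


def nlms_aec(far_end, mic, taps=32, mu=0.5, eps=1e-8):
    """Remove the far-end echo from mic using an NLMS adaptive filter.

    far_end: reference samples played through the room loudspeakers
    mic:     microphone samples containing the echo of far_end
    Returns the residual signal that would be sent back to the far end.
    """
    weights = [0.0] * taps  # running estimate of the echo path
    history = [0.0] * taps  # last `taps` far-end samples, newest first
    residual = []
    for x, d in zip(far_end, mic):
        history = [x] + history[:-1]
        echo_estimate = sum(w * h for w, h in zip(weights, history))
        error = d - echo_estimate
        energy = eps + sum(h * h for h in history)
        step = mu * error / energy  # step size normalised by reference energy
        weights = [w + step * h for w, h in zip(weights, history)]
        residual.append(error)
    return residual


if __name__ == "__main__":
    random.seed(0)
    far = [random.uniform(-1.0, 1.0) for _ in range(4000)]
    echo_path = [0.0, 0.5, 0.3, -0.2, 0.1]  # hypothetical room response
    mic = [sum(c * far[n - k] for k, c in enumerate(echo_path) if n >= k)
           for n in range(len(far))]
    out = nlms_aec(far, mic)
    before = sum(s * s for s in mic[3000:])
    after = sum(s * s for s in out[3000:])
    erle_db = 10 * math.log10(before / max(after, 1e-300))
    print(f"Echo return loss enhancement over the last 1000 samples: {erle_db:.1f} dB")
```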

Audio-over-IP (AoIP) Networking: Dante and AES67

Legacy audio systems relied on point-to-point analog XLR cabling, which is susceptible to electromagnetic interference and physical degradation. Modern professional installations use Audio-over-IP (AoIP) instead, primarily Dante, with AES67 as the interoperability layer. Dante (Digital Audio Network Through Ethernet) carries hundreds of channels of uncompressed, low-latency digital audio over a standard Gigabit Ethernet network. This requires a properly configured managed network with Quality of Service (QoS) enabled, so that clock synchronization and audio packets take priority over less critical traffic. For security, Dante Domain Manager (DDM) is essential. DDM adds user authentication, role-based access control over devices and domains, audit logging, and managed routing of Dante audio across network subnets, which prevents unauthorized users from discovering, subscribing to, or rerouting sensitive audio channels on the corporate network. AES67 is an interoperability standard that allows audio to be exchanged between different AoIP ecosystems, supporting future-proofing and compatibility.
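
A little arithmetic shows why a single Gigabit link can carry hundreds of uncompressed channels. Assuming 48 kHz, 24-bit audio (a common Dante configuration, used here as an assumption), each mono channel needs 48,000 × 24 = 1,152,000 bit/s of payload. The Python sketch below turns that into a payload-only ceiling for a chosen share of the link. The 70% utilisation figure is a hypothetical planning margin, and real capacity is lower once packet headers, clock traffic, flow limits, and each device's own channel-count limits are taken into account.

```python
def channel_payload_bps(sample_rate_hz=48_000, bit_depth=24):
    """Raw PCM payload for one mono channel in bits per second (no packet overhead)."""
    return sample_rate_hz * bit_depth


def payload_channel_ceiling(link_bps=1_000_000_000, utilisation=0.7,
                            sample_rate_hz=48_000, bit_depth=24):
    """Upper bound on channels fitting in `utilisation` of a link, payload only."""
    budget_bps = link_bps * utilisation
    return int(budget_bps // channel_payload_bps(sample_rate_hz, bit_depth))


if __name__ == "__main__":
    print("Bits per second for one channel:", channel_payload_bps())
    print("Payload-only channel ceiling (70% of 1 Gbit/s):", payload_channel_ceiling())
```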

Mix-Minus, Hybrid Integration, and Redundancy

The ultimate goal is seamless communication between all parties. This requires sophisticated routing to control what each participant hears, plus robust failover systems that keep critical proceedings running through component failures. Integrating broadcast-level control with general-purpose UC platforms is a significant engineering challenge, and it must be met with professional hardware and workflows.

Mastering the Mix-Minus for Hybrid Events

A mix-minus is one of the most crucial concepts in broadcast audio and is essential for hybrid events. It is a custom mix built for one remote source: the full program mix (all local microphones plus every other remote source) MINUS that source’s own audio. Without a mix-minus feed, a remote participant would hear their own voice returning on a slight delay, which is highly disorienting and makes conversation nearly impossible. In a professional DSP, a separate mix-minus is generated for each remote source connected to it. For example, if a Microsoft Teams call and a separate telephone line both connect to the DSP, it creates two unique outputs. Output 1, sent to the Teams call, contains the local room mix plus the telephone audio, minus the Teams audio. Output 2, sent to the telephone line, contains the local room mix plus the Teams audio, minus the telephone audio. Within a single Teams or Zoom call, the platform itself builds each participant’s return mix, so the DSP treats that call as one remote source. This ensures a clean, echo-free experience for all remote attendees.
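
The routing rule is simple to express in code. In this Python sketch, each remote source receives the local room mix plus every other remote source, which equals the full program mix minus its own contribution. Signals are plain lists of samples of equal length, and the source names (`teams`, `phone`) are hypothetical labels; a real DSP implements this as a matrix mixer rather than in application code.

```python
def mix_minus_sends(local_mix, remotes):
    """Return one send per remote source: the local mix plus all *other* remotes.

    local_mix: samples of the summed local microphones
    remotes:   dict mapping a remote-source name to its received samples
    """
    sends = {}
    for name in remotes:
        send = list(local_mix)
        for other, samples in remotes.items():
            if other != name:  # never return a source to itself
                send = [a + b for a, b in zip(send, samples)]
        sends[name] = send
    return sends


if __name__ == "__main__":
    room = [0.2, 0.1, -0.1]
    sources = {"teams": [0.05, 0.0, 0.02], "phone": [0.0, 0.03, 0.0]}
    for name, send in mix_minus_sends(room, sources).items():
        print(name, send)
```

Summing everything except the source, rather than subtracting it from the finished program mix, guarantees that none of the source's own audio (not even rounding residue) reaches its send.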

Interfacing with Unified Communications (UC) Platforms

Getting professionally mixed audio into and out of platforms such as Zoom or Microsoft Teams requires specific hardware interfaces. Although these platforms are designed around simple USB webcams and headsets, they can accept high-quality audio sources. The most common method is a platform-certified DSP that connects to the host PC via USB. The DSP presents the entire microphone array and mix-minus system to the UC software as a single USB audio input and output device, so echo cancellation and noise reduction are handled by the room DSP rather than by the platform’s generic, and often inferior, processing. QSC, Biamp, and Shure offer certified solutions that ensure compatibility and high performance. For systems built entirely on Dante, a Dante AVIO USB Adapter can serve as a simple but effective bridge, converting Dante audio channels into a class-compliant USB audio connection; because the adapter performs no echo cancellation itself, AEC must still be handled by the DSP.

Implementing Full Audio Redundancy

For a board of directors meeting, audio failure is not an option. Redundancy must be engineered into the system architecture at every critical point. In a Dante-based system, this starts with a redundant network topology: a primary and a secondary network built on physically separate cabling and network switches. Every Dante device with a secondary port (microphones, DSP, amplifiers) is connected to both networks, and audio is transmitted on both simultaneously. If a primary switch fails or a cable is cut, receiving devices simply continue with the secondary stream, with no audible interruption. Critical hardware such as the core DSP should be a model that supports a redundant hot-standby unit; if the primary processor fails, the standby takes over the full processing load automatically. Finally, all core components, including network switches and DSPs, should be powered through Uninterruptible Power Supplies (UPS) and, where possible, use hardware with dual Power Supply Units (PSUs) to protect against both mains power failure and internal power-supply failure.
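
Dual-network redundancy avoids an audible gap because identical packets are sent on both networks and the receiver plays whichever copy it has, so there is no switchover to wait for. The Python sketch below models that behaviour with packet streams represented as dictionaries keyed by sequence number. It is a simplified illustration, not Audinate's implementation; real receivers also handle timing, buffering, and sequence-number wrap-around.

```python
def merge_redundant(primary, secondary):
    """Combine packet streams received on the primary and secondary networks.

    Each argument maps sequence number -> payload. A packet is usable if it
    arrived on either network; only packets missing from both are lost.
    """
    merged = {}
    for seq in sorted(primary.keys() | secondary.keys()):
        merged[seq] = primary[seq] if seq in primary else secondary[seq]
    return merged


def lost_packets(merged, first_seq, last_seq):
    """Sequence numbers in [first_seq, last_seq] that neither network delivered."""
    return [seq for seq in range(first_seq, last_seq + 1) if seq not in merged]


if __name__ == "__main__":
    sent = {seq: f"audio-{seq}" for seq in range(10)}
    # Hypothetical failure: the primary switch drops packets 4 to 7.
    primary = {seq: p for seq, p in sent.items() if not 4 <= seq <= 7}
    secondary = dict(sent)
    merged = merge_redundant(primary, secondary)
    print("lost:", lost_packets(merged, 0, 9))
```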

Secure Transmission and Encoding for Remote Broadcast

The final stage of the signal chain involves securely delivering the final, mixed program audio to all intended recipients who are not on the primary UC platform, such as an overflow audience or executives viewing via a secure web portal. This is a broadcast workflow that demands secure, high-quality encoding and a reliable transport protocol designed for transmission over the public internet.

Selecting Secure Streaming Protocols

The choice of streaming protocol is critical for security and reliability. RTMP (Real-Time Messaging Protocol) is still a common ingest protocol for many platforms, but plain RTMP is unencrypted. RTMPS wraps the stream in TLS, yet because both run over TCP, packet loss on the public internet triggers retransmission stalls that add latency and can cause the stream to buffer or drop. The modern professional standard for secure, reliable audio and video contribution over the internet is SRT (Secure Reliable Transport). SRT runs over UDP and supports AES encryption with 128-, 192-, or 256-bit keys, so the contents of the stream cannot be deciphered if intercepted. Its key advantage is packet loss recovery based on ARQ (Automatic Repeat reQuest): the receiver detects missing packets and requests retransmission within a configurable latency window, allowing it to ride out the network instability typical of public internet connections without glitches or dropouts. For any sensitive corporate broadcast, SRT should be the default protocol for point-to-point transmission to the streaming server or platform.
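
The ARQ principle behind SRT's loss recovery can be shown with a toy receiver: it watches sequence numbers, reports any gap with a negative acknowledgement (NAK) so the sender can retransmit, and releases packets to the decoder only in order. This Python sketch is a simplified illustration, not SRT's wire protocol or the libsrt API; the class name is hypothetical, and it omits sequence-number wrap-around, NAK timers, and the latency window after which SRT gives up on a late packet.

```python
class ToyArqReceiver:
    """Receiver-side ARQ sketch: detect sequence gaps, NAK them, deliver in order."""

    def __init__(self):
        self.next_expected = 0    # highest sequence number seen so far, plus one
        self.missing = set()      # NAKed sequence numbers not yet received
        self.buffer = {}          # received payloads awaiting in-order delivery
        self.next_to_deliver = 0  # next sequence number the decoder needs

    def on_packet(self, seq, payload):
        """Accept one packet and return the sequence numbers to NAK (may be empty)."""
        if seq < self.next_to_deliver:
            return []  # duplicate of a packet already delivered; ignore it
        naks = []
        if seq >= self.next_expected:
            naks = list(range(self.next_expected, seq))  # gap before this packet
            self.missing.update(naks)
            self.next_expected = seq + 1
        else:
            self.missing.discard(seq)  # a retransmission filled a hole
        self.buffer[seq] = payload
        return naks

    def deliver(self):
        """Release payloads in sequence order, stopping at the first hole."""
        out = []
        while self.next_to_deliver in self.buffer:
            out.append(self.buffer.pop(self.next_to_deliver))
            self.next_to_deliver += 1
        return out


if __name__ == "__main__":
    rx = ToyArqReceiver()
    for seq in (0, 1, 4):  # packets 2 and 3 are lost in transit
        print(seq, "NAK:", rx.on_packet(seq, f"pkt{seq}"))
    print("deliverable:", rx.deliver())
    for seq in (2, 3):     # the retransmissions arrive
        rx.on_packet(seq, f"pkt{seq}")
    print("deliverable:", rx.deliver())
```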

Audio Encoding and Bitrate Management

Before transmission, the uncompressed PCM audio from the DSP must be compressed using an efficient codec. The industry standard for streaming is AAC (Advanced Audio Coding). For speech-critical applications, a bitrate of 128 kbps to 192 kbps for a stereo feed provides excellent quality that is virtually indistinguishable from the uncompressed source. The Opus codec is another excellent, low-latency choice often used in WebRTC applications. A professional hardware or software encoder will take the final audio feed, typically embedded within an SDI (Serial Digital Interface) video signal or as a discrete input, and encode it to these specifications for transport via SRT. This ensures that the pristine audio captured and processed in the room is delivered to the remote audience with its quality and security intact.
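
The bitrate figures above can be put in context with simple arithmetic. Assuming the DSP delivers 48 kHz, 24-bit stereo PCM (an assumption; the text does not specify the format), the uncompressed stream runs at 48,000 × 24 × 2 = 2,304,000 bit/s, so AAC at 128 to 192 kbps represents roughly an 18:1 to 12:1 reduction. This Python sketch computes those ratios and the data volume of one hour of encoded audio.

```python
def pcm_bitrate_bps(sample_rate_hz=48_000, bit_depth=24, channels=2):
    """Bitrate of uncompressed PCM audio in bits per second."""
    return sample_rate_hz * bit_depth * channels


def compression_ratio(codec_bps, sample_rate_hz=48_000, bit_depth=24, channels=2):
    """How many times smaller the encoded stream is than the PCM source."""
    return pcm_bitrate_bps(sample_rate_hz, bit_depth, channels) / codec_bps


def megabytes_per_hour(bitrate_bps):
    """Data volume of one hour at a constant bitrate (1 MB = 10**6 bytes)."""
    return bitrate_bps * 3600 / 8 / 1_000_000


if __name__ == "__main__":
    for aac_kbps in (128, 192):
        bps = aac_kbps * 1000
        print(f"AAC {aac_kbps} kbps: {compression_ratio(bps):.0f}:1, "
              f"{megabytes_per_hour(bps):.1f} MB per hour")
```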

In conclusion, architecting audio for sensitive director meetings is a specialized discipline that rises to the level of broadcast engineering. It demands a holistic approach that considers the acoustic environment, a secure and redundant digital signal chain, sophisticated processing for hybrid integration, and encrypted transport for final delivery. By treating the boardroom not as an office but as a broadcast studio, enterprises can ensure that their most critical communications are delivered with clarity, reliability, and confidentiality. Partnering with technical experts who specialize in these mission-critical B2B production environments is the most dependable way to achieve a successful outcome.