March 4, 2026 by Editor |

The transition from a physical stage to a digital podium has fundamentally altered the calculus of executive communication. For corporate event planners, AV professionals, and IT directors, a CEO’s virtual keynote is no longer a simple video conference. It is a high-stakes broadcast that reflects the stability, competence, and technological fluency of the entire enterprise. A pixelated video feed, unintelligible audio, or an abrupt stream failure can erode stakeholder confidence far more than a poorly delivered line. Achieving a state of broadcast-grade excellence requires a meticulous approach to production infrastructure, a deep understanding of streaming protocols, and a commitment to zero-failure engineering. This is not about applying consumer-grade tools to a corporate environment; it is about architecting a bespoke digital stage that empowers executive presence and guarantees flawless delivery to a global audience of employees, investors, and partners. The technical decisions made in the control room and the data center directly impact the authority and clarity of the message originating from the C-suite.

Signal Chain Architecture: From Lens to Local Area Network

The foundation of any professional broadcast is an uncompromised signal chain. This chain begins at the point of capture, the camera and microphone, and extends through physical and networked transport to the production core. Each component is a critical link, and failure or poor specification at any point degrades the entire output. The goal is to preserve the highest possible signal integrity throughout the acquisition and routing process, delivering pristine audio and video to the encoding stage.

Camera, Sensor, and Optics Specification

The visual element begins with the camera system. While high-end webcams have improved, they lack the optical quality and signal control required for a professional production. The primary choice is between broadcast or cinema-style cameras and professional PTZ (Pan-Tilt-Zoom) systems. Traditional ENG (Electronic News Gathering) cameras are built around smaller sensors, commonly 2/3-inch, whereas a cinema-style camera with a Super 35mm or full-frame sensor and interchangeable lenses provides the most creative control, offering shallow depth of field to isolate the speaker and superior low-light performance. For enterprise environments, high-end PTZ cameras with 1-inch CMOS sensors offer a compelling balance of quality and operational efficiency, allowing for remote control of camera position, zoom, and focus. The critical specification is the output signal. The system must support a professional, uncompressed baseband video format, typically via a 12G-SDI (Serial Digital Interface) connection capable of transmitting 4K/UHD (Ultra High Definition) video at 60 frames per second. HDMI 2.0 can carry the same format and can serve as an alternative, but SDI offers longer cable runs and locking connectors essential for production environments.
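To make the 12G-SDI requirement concrete, the following minimal sketch estimates the active-picture data rate of an uncompressed signal and finds the smallest nominal SDI link that could carry it. It assumes 10-bit 4:2:2 sampling and ignores blanking and ancillary data, so the figures are approximations; the function names are illustrative.

```python
# Nominal SDI link rates in Gbps.
SDI_LINK_RATES_GBPS = {
    "HD-SDI": 1.485,
    "3G-SDI": 2.97,
    "6G-SDI": 5.94,
    "12G-SDI": 11.88,
}


def active_video_gbps(width, height, fps, bit_depth=10, samples_per_pixel=2.0):
    """Active-picture data rate in Gbps.

    samples_per_pixel is 2.0 for 4:2:2 (one luma sample plus, on average,
    one chroma sample per pixel). Blanking and ancillary data are ignored.
    """
    return width * height * fps * bit_depth * samples_per_pixel / 1e9


def smallest_sdi_link(gbps):
    """Return the name of the slowest nominal link that fits, or None."""
    for name, rate in sorted(SDI_LINK_RATES_GBPS.items(), key=lambda kv: kv[1]):
        if gbps <= rate:
            return name
    return None


uhd60 = active_video_gbps(3840, 2160, 60)  # 4K/UHD at 60 fps
hd60 = active_video_gbps(1920, 1080, 60)   # 1080p at 60 fps

print(f"UHD60: {uhd60:.2f} Gbps -> {smallest_sdi_link(uhd60)}")
print(f"HD60:  {hd60:.2f} Gbps -> {smallest_sdi_link(hd60)}")
```

Under these assumptions a UHD 60 fps picture needs just under 10 Gbps of payload, which is why a 6G-SDI link is not enough and 12G-SDI is specified.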

Professional Audio Signal Flow

Audio is arguably more critical than video for message clarity. The standard for executive keynotes is a dual-microphone setup for redundancy. This typically involves two professional lavalier microphones, each connected to a separate wireless beltpack transmitter operating on diverse UHF frequencies to mitigate interference. These signals are received and fed into a professional audio mixer. Here, an audio engineer applies crucial processing: equalization (EQ) to shape vocal tone for clarity, compression to manage dynamic range and ensure consistent levels, and a noise gate to suppress background noise between phrases. The final mixed audio, often referred to as the program audio, is then embedded into the SDI video signal using an audio embedder. Embedding keeps audio and video locked together through all downstream routing, which helps preserve lip-sync once any processing delay in the audio chain has been compensated. It also simplifies routing, as a single SDI cable now carries both video and multiple channels of audio.
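The gate and compressor stages described above can be summarized by a static input/output curve. The sketch below is a simplified illustration only: real processors add attack, release, and hold behavior, and the threshold, ratio, and gate values here are assumed placeholders rather than recommended settings.

```python
def gate_and_compress(level_db, gate_db=-50.0, threshold_db=-18.0, ratio=3.0):
    """Map an input level (dBFS) to an output level (dBFS).

    - Below gate_db the signal is muted (returned as -inf).
    - Between gate_db and threshold_db the signal passes unchanged.
    - Above threshold_db the excess is divided by the compression ratio.
    All default values are illustrative assumptions.
    """
    if level_db < gate_db:
        return float("-inf")
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio


for level in (-60.0, -30.0, -6.0):
    print(level, "->", gate_and_compress(level))
```

With these example settings, room noise at -60 dBFS is gated out, normal speech at -30 dBFS passes untouched, and a loud peak at -6 dBFS is pulled down to -14 dBFS.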

Signal Transport and Routing: SDI, NDI, and Fiber

Once captured, these high-bitrate signals must be reliably transported to the production switcher. The industry standard remains SDI, a robust coaxial cable-based system defined by SMPTE (Society of Motion Picture and Television Engineers) standards. For longer distances within a facility, fiber optic converters are used to extend the SDI signal over kilometers without degradation. However, IP-based transport protocols are revolutionizing facility design. NDI (Network Device Interface), a protocol originally developed by NewTek, allows for the transmission of high-quality, low-latency compressed video and audio over a standard Gigabit Ethernet network. This simplifies cabling and allows for immense flexibility in sourcing feeds from across a corporate campus. A properly configured network with Quality of Service (QoS) policies is essential for NDI to function reliably, prioritizing video packets to prevent drops or stuttering.
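Capacity planning matters as much as QoS when video shares an Ethernet link. The following sketch estimates how many streams fit on a link while leaving headroom. The per-stream bitrates and the 75% utilization cap are assumptions chosen for illustration; actual NDI bitrates vary with resolution, frame rate, and picture content, so measure real traffic before committing to a design.

```python
def max_streams(link_mbps, stream_mbps, utilization_cap=0.75):
    """Number of whole streams that fit within a capped share of a link.

    utilization_cap reserves headroom for bursts and other traffic; the
    0.75 default is an assumed planning figure, not a standard.
    """
    if stream_mbps <= 0:
        raise ValueError("stream_mbps must be positive")
    usable = link_mbps * utilization_cap
    return int(usable // stream_mbps)


# Assumed per-stream figures, for illustration only.
ASSUMED_HD_STREAM_MBPS = 125
ASSUMED_UHD_STREAM_MBPS = 250

print(max_streams(1000, ASSUMED_HD_STREAM_MBPS))    # Gigabit link, HD feeds
print(max_streams(1000, ASSUMED_UHD_STREAM_MBPS))   # Gigabit link, UHD feeds
print(max_streams(10000, ASSUMED_UHD_STREAM_MBPS))  # 10 Gigabit uplink
```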

The Production Core: Switching, Graphics, and Control

The production core is the central nervous system of the live stream, where all audio and video sources are managed, mixed, and composed into the final program feed. This environment, whether a physical control room or a cloud-based software solution, is where the technical director, graphics operator, and audio engineer orchestrate the live broadcast. It is the virtual green room and master control, responsible for every element the audience sees and hears.

Hardware vs. Software Video Switching

The heart of the production core is the video switcher. Hardware switchers, such as Blackmagic Design’s ATEM Constellation or Ross Video’s Carbonite series, offer dedicated processing and tactile control surfaces for maximum reliability and low latency, measured in single-digit milliseconds. They are the standard for high-stakes broadcast events. Software-based solutions like vMix or OBS Studio, running on powerful PC hardware with video capture cards, offer incredible flexibility and are potent tools for many enterprise applications. The choice depends on the scale and criticality of the event. For a CEO all-hands, a hardware switcher provides the operational assurance that a software solution, subject to operating system overhead, cannot always guarantee. The switcher is used to cut between different camera angles, integrate presentation slides, and roll in pre-recorded video packages.

Graphics, Titling, and Data Integration

Professional on-screen graphics are essential for reinforcing key messages and maintaining brand consistency. This includes lower-third titles identifying the speaker, full-screen graphics for data visualization, and branded motion graphics for transitions. These elements are typically generated on a dedicated graphics system, like Singular.live or Chyron, and fed into the production switcher as a key-and-fill signal or via NDI. This allows the graphics to be cleanly overlaid on top of the live video. ISO (isolated) recording of all camera feeds, including a clean program feed without graphics, is a best practice, providing maximum flexibility for post-event editing and repurposing.
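The key-and-fill overlay comes down to a per-pixel blend: the key signal acts as a matte that decides how much of the fill replaces the background. Here is a minimal sketch of a linear key, assuming a straight (not premultiplied) fill and values normalized to the range 0.0 to 1.0; the function name is illustrative.

```python
def key_over(background, fill, key):
    """Composite one pixel of fill over background using a linear key.

    background, fill: (r, g, b) tuples with components in 0.0-1.0.
    key: matte value in 0.0-1.0 (0 = background only, 1 = fill only).
    Assumes a straight, non-premultiplied fill.
    """
    if not 0.0 <= key <= 1.0:
        raise ValueError("key must be between 0.0 and 1.0")
    return tuple(f * key + b * (1.0 - key) for b, f in zip(background, fill))


camera_pixel = (0.2, 0.4, 0.6)
title_pixel = (1.0, 1.0, 1.0)

print(key_over(camera_pixel, title_pixel, 0.0))  # graphic fully transparent
print(key_over(camera_pixel, title_pixel, 1.0))  # graphic fully opaque
print(key_over((0.0, 0.0, 0.0), title_pixel, 0.5))  # soft edge
```

Intermediate key values are what give lower-thirds their soft edges and drop shadows instead of a hard cut-out.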

Monitoring, Scopes, and Quality Assurance

You cannot manage what you cannot measure. A critical component of the production core is the multiview monitoring system, which displays all video sources, preview, and program feeds on a single large monitor. This gives the technical director complete situational awareness. Alongside the visual feeds, technical monitoring tools are essential. A waveform monitor is used to verify video exposure levels are within legal broadcast limits, preventing clipped whites or crushed blacks. A vectorscope visualizes color information, ensuring accurate color reproduction and consistent skin tones, a critical element for conveying executive presence. These tools ensure the outgoing signal adheres to strict technical standards before it ever reaches the encoder.
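The exposure check a waveform monitor supports can be approximated in software. The sketch below counts luma samples that fall outside the narrow ("legal") range, assuming 8-bit video where luma nominally spans code values 16 to 235. It is a simplified illustration, not a substitute for a calibrated scope.

```python
def out_of_range_fraction(luma_samples, low=16, high=235):
    """Fraction of luma samples outside the narrow ("legal") range.

    Defaults assume 8-bit video; for 10-bit signals the equivalent
    limits are 64 and 940.
    """
    if not luma_samples:
        return 0.0
    bad = sum(1 for y in luma_samples if y < low or y > high)
    return bad / len(luma_samples)


# A tiny hypothetical scanline: two samples are out of range (240 and 5).
scanline = [16, 128, 235, 240, 5]
print(out_of_range_fraction(scanline))
```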

Contribution and Distribution: Protocols and Network Architecture

With a pristine program feed assembled, the next stage is to transport it from the production location to the distribution platform, a process known as contribution. This is the most vulnerable part of the entire chain, as it often relies on public internet infrastructure. The choice of transport protocol, encoding parameters, and network design is paramount for ensuring a stable and high-quality stream reaches the audience.

Encoding Standards: H.264 vs. H.265 (HEVC)

The program feed, an uncompressed video signal with a bitrate of roughly 12 Gbps for 4K at 60 frames per second, must be compressed for internet transport. This is the job of an encoder. The two dominant codecs are H.264 (AVC) and H.265 (HEVC). H.264 is the most widely supported standard, compatible with virtually all platforms. H.265 is its successor, offering approximately 40-50% greater compression efficiency at the same level of quality. For a 1080p stream, a high-quality H.264 encode might require 6-8 Mbps (megabits per second), while H.265 could achieve the same quality at 3-5 Mbps. This efficiency is critical for saving bandwidth and improving reliability over constrained networks. A bitrate ladder, produced either by the encoder or by the streaming platform's transcoder, then provides adaptive bitrate streaming, allowing viewers with different network capacities to receive an optimal stream.
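The arithmetic behind these figures, and the way a bitrate ladder is used, can be sketched in a few lines. The first function applies the 40-50% savings quoted above to the H.264 range. The ladder rungs and the 0.8 safety factor are hypothetical values chosen for illustration, and real players use more elaborate selection logic.

```python
def hevc_equivalent_mbps(h264_mbps, savings):
    """H.265 bitrate for similar quality, given a fractional saving."""
    return h264_mbps * (1.0 - savings)


# Hypothetical ladder: (frame height, bitrate in Mbps).
LADDER = [(1080, 6.0), (720, 3.5), (480, 1.5), (360, 0.8)]


def pick_rendition(ladder, throughput_mbps, safety=0.8):
    """Pick the highest-bitrate rung that fits within a safety margin.

    Falls back to the lowest rung when nothing fits.
    """
    budget = throughput_mbps * safety
    for height, mbps in sorted(ladder, key=lambda r: r[1], reverse=True):
        if mbps <= budget:
            return height
    return min(ladder, key=lambda r: r[1])[0]


print(hevc_equivalent_mbps(6.0, 0.5), hevc_equivalent_mbps(8.0, 0.4))
print(pick_rendition(LADDER, 10.0))  # fast office connection
print(pick_rendition(LADDER, 4.0))   # congested home Wi-Fi
print(pick_rendition(LADDER, 0.5))   # very poor mobile link
```

Applying a 50% saving to 6 Mbps and a 40% saving to 8 Mbps gives roughly 3 and 4.8 Mbps, consistent with the 3-5 Mbps range above.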

Transport Protocols: SRT vs. RTMP

The protocol wraps the encoded video for transport. For years, RTMP (Real-Time Messaging Protocol) was the standard. While still widely used for ingest by platforms, it runs over TCP (Transmission Control Protocol), so packet loss on the path translates into stalls and growing latency. The modern successor is SRT (Secure Reliable Transport). SRT is an open-source protocol that runs over UDP (User Datagram Protocol) and adds its own retransmission of lost packets, aiming for TCP-like reliability at UDP-like latency. It excels at traversing congested networks by detecting and retransmitting lost packets within a configurable latency window, correcting for jitter, and securing the stream with AES encryption using keys of up to 256 bits. For a high-stakes executive keynote, contributing via SRT from the production site to the cloud streaming platform is the current industry best practice, dramatically improving resilience over RTMP.
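SRT can only recover a lost packet if the retransmission arrives before the receiver's latency window closes, so that window is usually sized as a multiple of the measured round-trip time (RTT). The sketch below illustrates the idea. The multipliers per loss band are illustrative assumptions, not vendor-published values, and the 120 ms floor mirrors SRT's usual default latency.

```python
def srt_latency_ms(rtt_ms, loss_pct, floor_ms=120):
    """Suggest an SRT latency window from RTT and observed packet loss.

    Higher loss needs room for more retransmission rounds, so the RTT
    multiplier grows with loss. The bands below are assumed values for
    illustration; tune them against real link measurements.
    """
    if loss_pct <= 1:
        multiplier = 3
    elif loss_pct <= 3:
        multiplier = 4
    elif loss_pct <= 7:
        multiplier = 5
    else:
        multiplier = 6
    return max(floor_ms, int(round(rtt_ms * multiplier)))


print(srt_latency_ms(20, 0.5))  # clean metro fiber: the floor applies
print(srt_latency_ms(80, 2))    # cross-country path with moderate loss
print(srt_latency_ms(150, 5))   # intercontinental path with heavy loss
```

The trade-off is direct: a larger window tolerates worse networks but adds the same amount of delay to the contribution feed.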

Architecting for Resilience: Redundancy and Failover

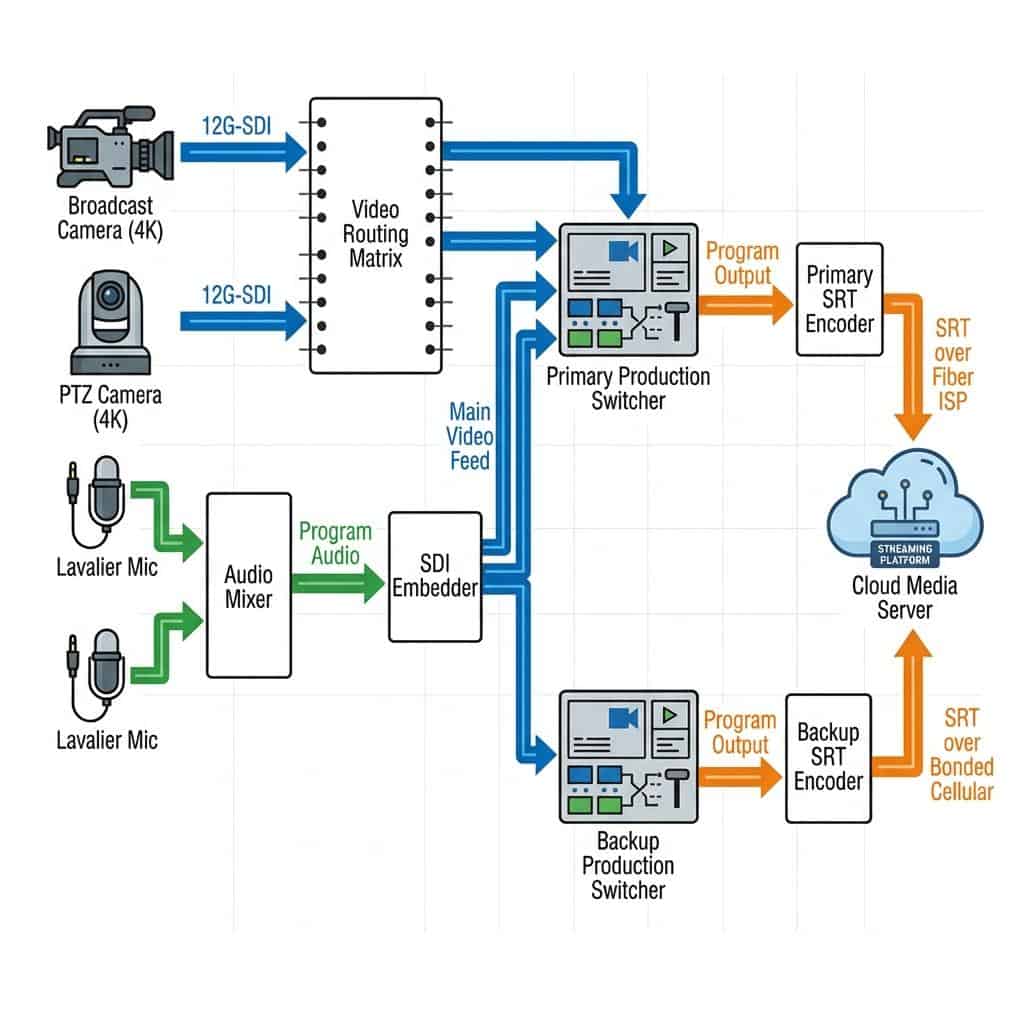

In a professional broadcast environment, there is no tolerance for failure. Every component and connection must have a backup plan. Architecting for resilience involves creating redundancy at every level of the signal chain, from the physical hardware in the room to the network paths used for contribution. The goal is a system that can withstand multiple component or network failures without any interruption to the end viewer.

Hardware and Source Redundancy

On-site resilience begins with the hardware. Key equipment like the production switcher and primary encoder should have redundant power supplies connected to separate power circuits. As mentioned, the CEO should be miked with two separate lavalier systems. A hot-spare encoder should be configured with the exact same settings as the primary, ready to take over instantly. This level of redundancy ensures that a single hardware failure does not result in a catastrophic loss of the broadcast.
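A hot spare is only useful if its settings truly match the primary, and that is easy to verify automatically before show time. The following sketch compares two encoder configurations and reports any drift. The setting names and values are hypothetical and stand in for whatever a given encoder actually exposes.

```python
def config_drift(primary, spare, ignore=("name", "output_path")):
    """Return {setting: (primary_value, spare_value)} for every mismatch.

    Keys listed in ignore are expected to differ between units and are
    skipped. A setting missing on one side is reported with None.
    """
    keys = (set(primary) | set(spare)) - set(ignore)
    return {
        k: (primary.get(k), spare.get(k))
        for k in sorted(keys)
        if primary.get(k) != spare.get(k)
    }


# Hypothetical settings exported from two encoders.
primary_cfg = {"name": "enc-a", "codec": "h264", "bitrate_kbps": 8000, "gop_s": 2}
spare_cfg = {"name": "enc-b", "codec": "h264", "bitrate_kbps": 6000, "gop_s": 2}

drift = config_drift(primary_cfg, spare_cfg)
print(drift or "spare matches primary")
```

Running a check like this as part of the pre-show checklist catches the common case where the primary was tuned during rehearsal and the spare was not.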

Network Path Diversity

The single greatest point of failure for most streams is the internet connection. To mitigate this, a dual-path contribution strategy is non-negotiable for critical events. This involves using two completely separate network providers. The primary path could be a dedicated enterprise fiber connection, while the secondary path might be a high-speed business cable or a bonded cellular solution that combines multiple cellular carriers (e.g., AT&T, Verizon, T-Mobile) into a single, highly reliable connection. Two separate encoders feed identical SRT streams over these diverse paths to the cloud media server. If the primary fiber path experiences congestion or an outage, a streaming platform that supports redundant ingest can switch to the secondary feed with little or no visible disruption to the viewer.
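The failover decision itself is simple to express. The sketch below is a hypothetical illustration of the selection logic, not any platform's actual API: it stays on the active feed while that feed is healthy, to avoid flapping, and moves to the standby only when the active feed degrades. The loss threshold is an assumed value.

```python
from dataclasses import dataclass


@dataclass
class FeedHealth:
    name: str
    connected: bool
    loss_pct: float  # unrecovered packet loss, in percent


def choose_feed(current, primary, backup, max_loss_pct=5.0):
    """Return the name of the feed that should be on air.

    current is the name of the feed currently on air. The active feed is
    kept while healthy; otherwise the standby is used if it is healthy.
    If both are unhealthy, the active feed is kept.
    """
    def healthy(feed):
        return feed.connected and feed.loss_pct <= max_loss_pct

    active = primary if current == primary.name else backup
    standby = backup if active is primary else primary
    if healthy(active):
        return active.name
    if healthy(standby):
        return standby.name
    return active.name


fiber = FeedHealth("primary", connected=False, loss_pct=0.0)
cellular = FeedHealth("backup", connected=True, loss_pct=0.3)
print(choose_feed("primary", fiber, cellular))  # fiber is down
```

Note that the logic does not automatically fail back when the primary recovers; returning to the original path is often left as a deliberate operator decision.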