March 24, 2026 by Editor |

The traditional annual report, a document defined by static text and retrospective data, is undergoing a fundamental transformation. For decades, it has served as a one-way, asynchronous communication channel. In the modern enterprise environment, where stakeholder engagement and data immediacy are paramount, this legacy approach is no longer sufficient. The future of stakeholder communication lies in converting the annual report from a static document into a dynamic, interactive, and secure live video event. This evolution presents significant technical challenges that demand broadcast-grade infrastructure, a deep understanding of video transport protocols, and a robust hybrid event strategy. A live-streamed annual report is not a simple webinar; it is a mission-critical B2B broadcast that requires engineering precision to ensure security, reliability, and a seamless experience for a global audience of investors, analysts, and employees.

Architecting the Core Production Infrastructure for High-Stakes Financial Broadcasting

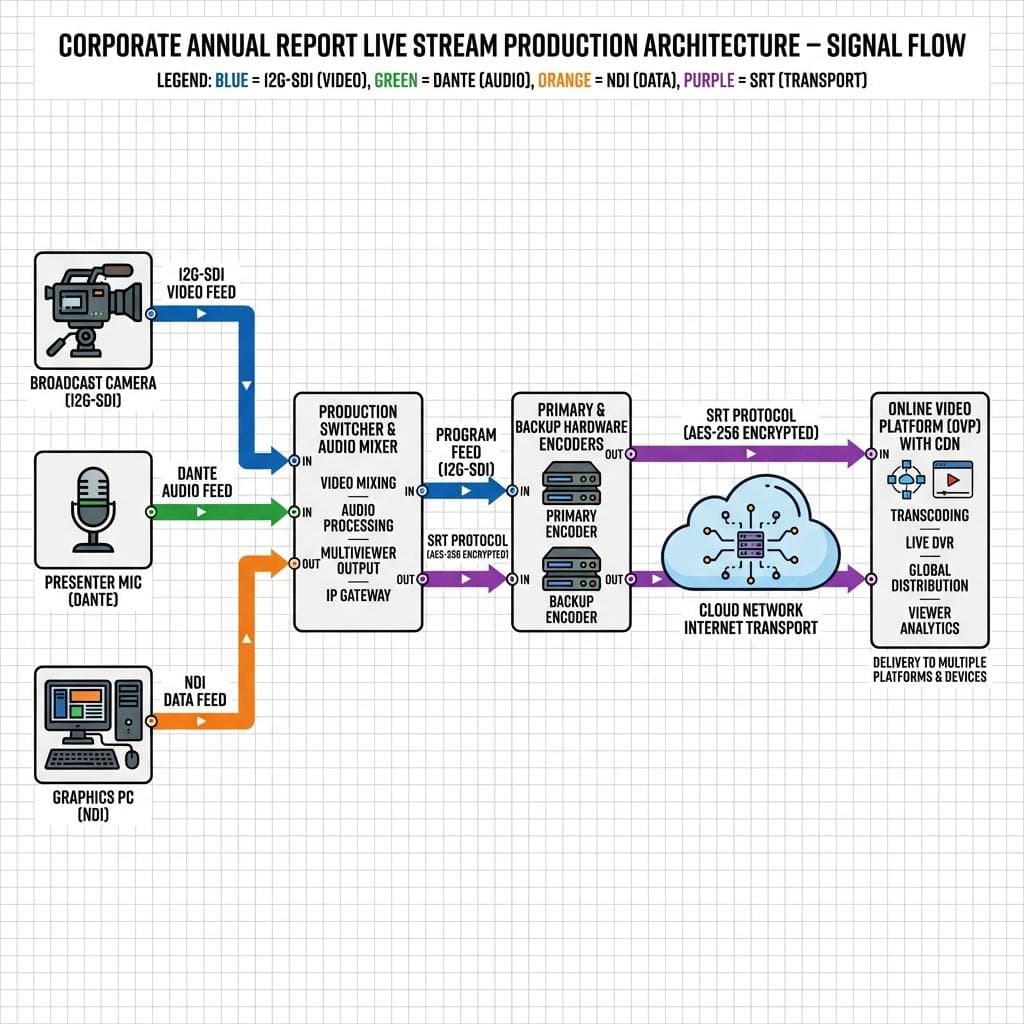

The foundation of a successful live video annual report is the production infrastructure. This is not a scenario for webcams and basic software encoders. It requires a meticulously planned hardware ecosystem capable of managing multiple high-quality video and audio sources with zero tolerance for failure. The architecture must be designed for both technical resilience and production flexibility, allowing for a polished, network-television-quality output that reflects the gravity of the financial information being presented.

Signal Acquisition and Ingest: Beyond the Webcam

Professional signal acquisition begins with broadcast-quality cameras, typically Super 35mm or full-frame sensor cameras connected via Serial Digital Interface (SDI). A 12G-SDI connection carries uncompressed 4K UHD (3840×2160) video at up to 60 frames per second over a single coaxial cable, providing pristine image quality and reliability over long cable runs within a studio or event venue. SDI is better suited to this environment than HDMI, which degrades over longer cable runs and lacks the locking connectors that BNC provides. Audio acquisition requires a similar professional approach. Presenter microphones, typically lavalier or shotgun mics, are fed into a dedicated audio mixing console that can process and route audio via analog XLR or digital audio-over-IP protocols like Dante. The final mixed audio is then embedded into the SDI video signal path, which keeps audio and video in sync and simplifies the signal chain downstream. Presentation assets, such as financial charts and pre-produced video packages, are ingested from dedicated graphics computers, often outputting via SDI or NDI (Network Device Interface), a high-quality, low-latency video-over-IP protocol ideal for local network transport.
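
To see why a single 12G-SDI cable is enough for 4K UHD at 60 frames per second, it can help to work through the arithmetic. The sketch below assumes 10-bit 4:2:2 sampling (an average of 20 bits per pixel) and counts only the active picture, ignoring blanking and embedded audio; the function name is illustrative.

```python
# Nominal 12G-SDI link rate in bits per second.
LINK_RATE_12G_BPS = 11.88e9


def active_video_bps(width: int, height: int, fps: int, bits_per_pixel: int) -> int:
    """Bits per second for the active picture only (no blanking, no audio)."""
    return width * height * fps * bits_per_pixel


# Assumption: 10-bit 4:2:2 sampling averages 20 bits per pixel
# (10 bits of luma plus 10 bits of shared chroma).
uhd_60 = active_video_bps(3840, 2160, 60, 20)

print(f"Active video: {uhd_60 / 1e9:.2f} Gbit/s")
print(f"Fits in 12G-SDI: {uhd_60 < LINK_RATE_12G_BPS}")
```

Under these assumptions the active picture comes to just under 10 Gbit/s, which leaves room for blanking and ancillary data within the nominal link rate.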

The Production Control Hub: Switching, Routing, and Monitoring

All incoming signals converge at the production control hub, which is the operational core of the live event. A production switcher, or video mixer, is used to cut, mix, and apply effects to the various video sources in real-time. This is where the technical director (TD) builds the program feed that the audience sees. For enterprise-grade events, this involves complex operations like picture-in-picture compositions for panel discussions and lower-third graphics for speaker identification. Signal routing is managed by an SDI matrix router, which provides the flexibility to send any source to any destination. This is critical for creating custom feeds for on-stage confidence monitors, teleprompters, and the essential multiview monitors. The multiview allows the production crew to see all sources simultaneously, along with Program and Preview outputs, audio level meters, and signal status. A crucial component of this hub is ISO (isolated) recording. Each camera feed and the final program output are recorded to separate, timecode-synced files. This provides full redundancy and allows for post-event re-editing or the creation of on-demand assets with different camera angles.
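
The "any source to any destination" behavior of a matrix router can be pictured as a table of crosspoints. The following is a toy model only, not a control API for any real router; the class, source names, and destination names are all hypothetical.

```python
class MatrixRouter:
    """Toy crosspoint model: each destination takes exactly one source."""

    def __init__(self, sources, destinations):
        self.sources = set(sources)
        self.destinations = set(destinations)
        self.crosspoints = {}  # destination -> source

    def route(self, source, destination):
        """Connect a source to a destination, replacing any earlier route."""
        if source not in self.sources:
            raise ValueError(f"unknown source: {source}")
        if destination not in self.destinations:
            raise ValueError(f"unknown destination: {destination}")
        self.crosspoints[destination] = source

    def feeding(self, source):
        """List every destination currently fed by the given source."""
        return sorted(d for d, s in self.crosspoints.items() if s == source)


router = MatrixRouter(
    sources=["CAM1", "CAM2", "GFX", "PGM"],
    destinations=["CONFIDENCE", "PROMPTER", "MULTIVIEW_1", "ISO_REC_1"],
)
router.route("PGM", "CONFIDENCE")
router.route("CAM1", "ISO_REC_1")
router.route("CAM1", "MULTIVIEW_1")   # one source can feed many destinations
router.route("GFX", "CONFIDENCE")     # re-routing replaces the previous source

print(router.crosspoints)
print(router.feeding("CAM1"))
```

The key property is the asymmetry: a source can fan out to many destinations, while each destination shows exactly one source at a time.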

Enterprise-Grade Encoding and Transport Protocols for Secure, Low-Latency Delivery

Once the program feed is produced, it must be encoded and transported from the event location to the distribution platform with maximum reliability and security. The choice of encoding parameters and transport protocol directly impacts the quality, latency, and stability of the stream that reaches stakeholders. This stage of the signal chain is a common point of failure in less professional setups, making a robust strategy essential for mission-critical broadcasts like an annual report.

Codec Selection and Bitrate Management: H.264 vs. H.265 (HEVC)

The two primary codecs for live streaming are H.264 (AVC) and H.265 (HEVC). H.264 offers the widest compatibility across devices and platforms. H.265, its successor, provides significantly better compression efficiency and can deliver comparable video quality at roughly half the bitrate. For a 4K UHD stream, using H.265 can reduce the required bandwidth from roughly 20-25 Mbps to 10-15 Mbps, a critical advantage on networks with limited egress capacity. Bitrate management is equally important. While variable bitrate (VBR) is suitable for on-demand files, constant bitrate (CBR) is the professional standard for live streaming. CBR ensures a predictable and stable data flow, preventing the buffering that bitrate spikes during complex scenes can cause. A typical 1080p60 stream is encoded at a CBR of 6-8 Mbps, while a 4K30 stream typically needs 15-20 Mbps using H.264. Hardware encoders are strongly recommended over software solutions for their stability and dedicated processing power, which removes the risk of CPU overload on a production machine.
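
The bitrate figures above translate directly into an uplink budget. The sketch below uses the article's "roughly half" estimate for H.265 and an assumed rule of thumb that the stream should occupy no more than half of the measured upload capacity; the headroom factor, the 256 kbps audio figure, and the 30 Mbps uplink are illustrative assumptions, not recommendations.

```python
def hevc_estimate_mbps(h264_mbps: float, savings: float = 0.5) -> float:
    """Estimate an H.265 bitrate from an H.264 one (assumes ~50% savings)."""
    return h264_mbps * (1.0 - savings)


def fits_uplink(video_mbps: float, audio_kbps: float,
                uplink_mbps: float, headroom: float = 0.5) -> bool:
    """True if video plus audio stays within the chosen share of the uplink."""
    total_mbps = video_mbps + audio_kbps / 1000
    return total_mbps <= uplink_mbps * headroom


h264_4k30 = 20.0                        # upper end of the 4K30 H.264 range
hevc_4k30 = hevc_estimate_mbps(h264_4k30)

print(f"Estimated H.265 bitrate: {hevc_4k30} Mbps")
print("H.264 fits 30 Mbps uplink:", fits_uplink(h264_4k30, 256, uplink_mbps=30))
print("H.265 fits 30 Mbps uplink:", fits_uplink(hevc_4k30, 256, uplink_mbps=30))
```

With these numbers the H.264 stream exceeds the budget while the H.265 estimate fits, which is the kind of constrained-egress case where the newer codec pays off.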

Transport Protocol Deep Dive: From RTMP to SRT

The protocol used to transport the encoded video is the conduit to your audience. For years, RTMP (Real-Time Messaging Protocol) was the standard. However, it is a legacy TCP-based protocol that performs poorly on networks with packet loss or fluctuating latency. The modern professional standard is SRT (Secure Reliable Transport). SRT is an open-source protocol that runs over UDP and adds its own selective packet retransmission, giving it TCP-like reliability while keeping the low latency of UDP. It excels at delivering high-quality video over unpredictable public networks, featuring AES 128/256-bit encryption for security and a configurable latency buffer within which lost packets can be re-sent. This makes SRT a strong choice for transporting a high-value broadcast feed from the production site to the cloud ingest point. NDI remains appropriate for moving video between devices on the local network, while SRT is the better fit for site-to-cloud contribution.
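
SRT's retransmission only helps if the receive latency buffer is long enough for a lost packet to be requested and re-sent. A minimal sketch of how a sender's settings might be planned is shown below. The loss thresholds and round-trip multipliers are an assumed rule of thumb rather than values taken from any specification, and the function names and dictionary keys are hypothetical. The 120 ms floor reflects SRT's default latency, and the 10 to 79 character passphrase limit and 16/32-byte key lengths reflect SRT's encryption options.

```python
SRT_DEFAULT_LATENCY_MS = 120  # SRT's default latency, used here as a floor


def rtt_multiplier(loss_pct: float) -> int:
    """Assumed rule of thumb: allow more round trips as packet loss rises."""
    if loss_pct <= 1:
        return 3
    if loss_pct <= 3:
        return 4
    if loss_pct <= 7:
        return 5
    return 6


def suggested_latency_ms(rtt_ms: float, loss_pct: float) -> int:
    """Latency buffer sized as a multiple of the measured round-trip time."""
    return max(SRT_DEFAULT_LATENCY_MS, round(rtt_ms * rtt_multiplier(loss_pct)))


def plan_srt_settings(rtt_ms: float, loss_pct: float,
                      passphrase: str, key_bytes: int = 32) -> dict:
    """Collect illustrative settings; the keys are not a real API."""
    if not 10 <= len(passphrase) <= 79:
        raise ValueError("SRT passphrases must be 10 to 79 characters long")
    if key_bytes not in (16, 32):  # AES-128 or AES-256
        raise ValueError("key_bytes must be 16 or 32")
    return {
        "latency_ms": suggested_latency_ms(rtt_ms, loss_pct),
        "key_bytes": key_bytes,
        "encrypted": True,
    }


settings = plan_srt_settings(rtt_ms=80, loss_pct=0.5,
                             passphrase="example-passphrase")
print(settings)
```

The trade-off is direct: a larger buffer tolerates more loss but adds the same amount of end-to-end delay, so the value is usually tuned against measured network conditions rather than set once.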

Building a Resilient Hybrid Event Platform for Global Stakeholder Access

A modern annual report must cater to both a physically present executive audience and a global virtual audience. This hybrid model requires an architecture that can seamlessly serve both groups while integrating with existing enterprise communication platforms and ensuring flawless global delivery. The key principles are platform integration and absolute redundancy for every component in the delivery chain.

Integrating with Enterprise UC Platforms and CDNs

For internal stakeholders participating interactively, the broadcast feed must be integrated into Unified Communications (UC) platforms like Microsoft Teams, Zoom, or Webex. This can be achieved using features like Teams’ RTMP-In functionality or Zoom’s direct integration with professional hardware. This allows employees to view the broadcast within a familiar environment. For large internal audiences, an Enterprise Content Delivery Network (ECDN) is deployed to manage video traffic efficiently within the corporate network, preventing WAN saturation. For external investors and the public, the SRT feed is sent to a professional Online Video Platform (OVP). An OVP provides a secure, customizable video player, detailed analytics, and massive scalability through a global Content Delivery Network (CDN). The CDN caches the video at edge servers around the world, ensuring a low-latency, high-quality viewing experience for stakeholders regardless of their geographic location.

Redundancy and Failover Architecture for Zero-Downtime Events

For a financial broadcast, there is no room for downtime. A resilient architecture employs a 1+1 or N+1 redundancy model for all critical components. This means running a primary and a fully independent backup encoder, each receiving the same program feed. These encoders should use network path diversity, sending their streams over two different internet connections; for example, a primary dedicated fiber line and a backup cellular bonding solution. This ensures that a failure of a single piece of hardware or an entire ISP network does not interrupt the broadcast. At the OVP or CDN level, automatic failover is configured. If the primary SRT feed is lost for longer than a configured threshold, typically a few seconds, the platform automatically switches to the backup feed, ideally with little or no visible interruption for the end-user. This level of resilience extends to power, with Uninterruptible Power Supplies (UPS) and, ideally, diverse power circuits for all production equipment.
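
The failover behavior described above can be sketched as a small selector that tracks when each feed last delivered data. This is a simplified illustration, not how any particular OVP or CDN implements it; the class name, the two-second timeout, and the non-revertive policy (staying on the backup once switched) are assumptions. Timestamps are passed in explicitly so the logic can be followed without a real clock.

```python
class FailoverSelector:
    """Chooses between a primary and a backup feed based on recent activity."""

    def __init__(self, timeout_s: float = 2.0):
        self.timeout_s = timeout_s
        self.last_seen = {"primary": None, "backup": None}
        self.active = "primary"

    def packet_received(self, feed: str, now: float) -> None:
        """Record that a feed delivered data at time `now` (seconds)."""
        self.last_seen[feed] = now

    def _healthy(self, feed: str, now: float) -> bool:
        seen = self.last_seen[feed]
        return seen is not None and (now - seen) <= self.timeout_s

    def select(self, now: float) -> str:
        """Switch only when the active feed is stale and the other is healthy."""
        if not self._healthy(self.active, now):
            other = "backup" if self.active == "primary" else "primary"
            if self._healthy(other, now):
                self.active = other
        return self.active


selector = FailoverSelector(timeout_s=2.0)

# Both encoders deliver data up to t = 1.0 s.
for t in (0.0, 0.5, 1.0):
    selector.packet_received("primary", t)
    selector.packet_received("backup", t)
before_outage = selector.select(1.0)

# The primary path fails; only the backup keeps delivering.
for t in (1.5, 2.0, 2.5, 3.0, 3.5):
    selector.packet_received("backup", t)
within_timeout = selector.select(2.5)   # primary silent for 1.5 s
after_timeout = selector.select(3.5)    # primary silent for 2.5 s

# The primary returns, but the selector stays on the backup.
selector.packet_received("primary", 4.0)
selector.packet_received("backup", 4.0)
after_recovery = selector.select(4.0)

print(before_outage, within_timeout, after_timeout, after_recovery)
```

Staying on the backup after recovery avoids a second switch mid-broadcast; a revertive policy is equally possible and is a design choice for the operator.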

Enabling Stakeholder Interaction: Q&A, Polling, and Data Integration

The primary advantage of a live video annual report is the capacity for real-time interaction. This requires technical workflows that can ingest audience participation and integrate it directly into the live broadcast in a controlled and professional manner, transforming passive viewing into active engagement.

Implementing Moderated Q&A and Real-Time Polling

Interactive elements like Q&A and polling are managed through the OVP or a third-party integration like Slido. Audience members submit questions through an interface embedded alongside the video player. These questions are routed to a moderation dashboard, where a communications manager can approve, queue, and organize them. The approved questions are then fed digitally to a display visible to the on-camera executives, such as a confidence monitor or teleprompter, allowing for a fluid and natural Q&A segment. Polling works similarly, with results being collected in real-time. These results can be integrated back into the production as a data source for a graphics engine, allowing for the instantaneous display of broadcast-quality charts and graphs illustrating stakeholder sentiment on-screen.
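
The moderation workflow described above is essentially a queue with an approval gate. The sketch below is a hypothetical in-memory model and does not represent the API of Slido or any OVP; all class, method, and status names are illustrative.

```python
from collections import deque
from dataclasses import dataclass
from itertools import count
from typing import Optional


@dataclass
class Question:
    qid: int
    author: str
    text: str
    status: str = "pending"   # pending -> approved -> on_air, or rejected


class ModerationQueue:
    """Holds audience questions until a moderator approves them."""

    def __init__(self):
        self._ids = count(1)
        self._questions = {}
        self._approved = deque()   # approved questions in approval order

    def submit(self, author: str, text: str) -> int:
        question = Question(next(self._ids), author, text.strip())
        self._questions[question.qid] = question
        return question.qid

    def approve(self, qid: int) -> None:
        question = self._questions[qid]
        if question.status == "pending":
            question.status = "approved"
            self._approved.append(question)

    def reject(self, qid: int) -> None:
        question = self._questions[qid]
        if question.status == "pending":
            question.status = "rejected"

    def next_for_prompter(self) -> Optional[Question]:
        """Return the next approved question for the confidence monitor."""
        if not self._approved:
            return None
        question = self._approved.popleft()
        question.status = "on_air"
        return question


queue = ModerationQueue()
q1 = queue.submit("Analyst A", "  What drove the margin change?  ")
q2 = queue.submit("Anonymous", "Off-topic remark")
q3 = queue.submit("Investor B", "What is the capital allocation plan?")

queue.approve(q3)
queue.reject(q2)
queue.approve(q1)

first = queue.next_for_prompter()
second = queue.next_for_prompter()
third = queue.next_for_prompter()
print(first.text, "|", second.text, "|", third)
```

Nothing reaches the executives' display without passing through `approve`, and the order of approval, not submission, determines what appears next.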

Secure Data Visualization and API Integrations

Displaying sensitive financial data during a live broadcast requires more than a simple screen share. For a truly professional look, dedicated broadcast graphics systems are used. These systems can ingest real-time data from secure APIs, databases, or even spreadsheets to populate pre-built graphical templates. This ensures that the financial figures, charts, and legal safe harbor statements displayed are not only accurate and dynamically updatable but are also rendered with the polished aesthetic of a major financial news broadcast. This API-driven workflow minimizes the chance of human error in data entry and allows for the presentation of complex information in a clear, visually compelling format.
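
As an illustration of the API-driven workflow, the sketch below takes a JSON payload, checks that the expected fields are present, and formats the values for a graphics template. The field names, the template keys, and the sample figure are hypothetical; a real graphics system would define its own schema and data source.

```python
import json
from decimal import Decimal

# Assumption: the data feed supplies these fields for each on-screen figure.
REQUIRED_FIELDS = ("label", "value", "currency", "period")


def build_template_fields(payload: str) -> dict:
    """Validate a JSON payload and format it for a graphics template."""
    # Parse decimals exactly instead of as binary floats.
    data = json.loads(payload, parse_float=Decimal)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"payload is missing fields: {', '.join(missing)}")
    value = Decimal(str(data["value"]))
    return {
        "title": f"{data['label']} ({data['period']})",
        "figure": f"{data['currency']} {value:,.2f}",
    }


# Hypothetical sample payload, not real financial data.
sample = '{"label": "Revenue", "value": 1234567.5, "currency": "USD", "period": "FY2025"}'
fields = build_template_fields(sample)
print(fields)
```

Rejecting incomplete payloads before they reach the renderer is what keeps a partial or malformed data update from appearing on air.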

In conclusion, transforming the annual report into an interactive live video event is a complex technical undertaking that elevates stakeholder communication to a new standard. It requires a strategic investment in broadcast-grade production infrastructure, a sophisticated understanding of secure video transport protocols like SRT, and a resilient, redundant delivery architecture. By integrating interactive elements and secure data visualization, companies can deliver a more transparent, engaging, and impactful financial narrative. Executing this requires a partner with deep expertise in B2B event streaming and hybrid production, capable of engineering a solution that guarantees a flawless and secure experience for all stakeholders.