March 7, 2026 by Editor |

The Core Challenge: Geopolitical Hub vs. Geophysical Reality

Executing a multi-city corporate town hall from a Singapore base presents a unique and formidable set of technical challenges. As a global business hub, Singapore is an ideal strategic location for corporate headquarters. However, its geographical position introduces significant geophysical latency when streaming real-time video to key financial centers in Europe and North America. For a high-stakes, C-suite address where every frame and syllable matters, managing this latency is not an IT problem; it is a core production engineering mandate. The success of the event hinges on delivering a synchronized, high-quality, and interactive experience to a geographically dispersed audience, where a delay of even a few seconds can disrupt engagement and undermine the message. This requires a robust architecture that moves beyond basic webcasting protocols and embraces broadcast-grade solutions designed for long-haul signal transport.

The fundamental issue is the physics of data transmission. The round-trip time (RTT) for data packets between Singapore and London is approximately 160-200 milliseconds, and to New York it approaches or exceeds 250 ms even over well-routed fiber. When using standard transmission protocols like the Real-Time Messaging Protocol (RTMP), which runs over the Transmission Control Protocol (TCP), this distance creates a vulnerability. TCP's acknowledgment and retransmission mechanism, while ensuring data integrity, is ill-suited to real-time video over high-latency networks. Because TCP delivers data strictly in order, a single lost packet holds back everything behind it until its retransmission arrives at least one RTT later, and TCP's congestion control cuts the sending rate after each loss and recovers it only slowly when the RTT is long. The result is stalls, buffering, and unacceptable viewing disruptions. Therefore, a successful multi-city town hall requires a purpose-built workflow that addresses latency at every stage: from initial photon capture in the Singapore studio to final pixel rendering on a screen in a New York boardroom.
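To make the physics concrete, the sketch below estimates two bounds. The first is the propagation-only RTT floor over a straight fiber path, assuming light travels at roughly 200,000 km/s in glass and using a great-circle (haversine) distance on a spherical Earth; the city coordinates are approximate and supplied here for illustration. The second is the well-known Mathis et al. approximation for the steady-state throughput ceiling of a single Reno-style TCP flow, (MSS/RTT) · sqrt(3/2)/sqrt(p). Modern congestion controllers such as CUBIC or BBR behave differently, so treat it as an order-of-magnitude illustration rather than a prediction for any particular RTMP encoder.

```python
import math

FIBER_KM_PER_S = 200_000  # assumed speed of light in fiber (refractive index ~1.5)
EARTH_RADIUS_KM = 6371    # mean Earth radius, spherical approximation

def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def min_rtt_ms(distance_km: float) -> float:
    """Propagation-only RTT floor: no routing detours, queuing, or equipment delay."""
    return 2 * distance_km / FIBER_KM_PER_S * 1000

def mathis_throughput_bps(mss_bytes: int, rtt_s: float, loss_rate: float) -> float:
    """Mathis et al. ceiling for one Reno-style TCP flow: (MSS/RTT) * sqrt(3/2) / sqrt(p)."""
    return (mss_bytes * 8 / rtt_s) * math.sqrt(1.5) / math.sqrt(loss_rate)

# Approximate city-centre coordinates (illustrative).
SINGAPORE = (1.3521, 103.8198)
LONDON = (51.5074, -0.1278)
NEW_YORK = (40.7128, -74.0060)

if __name__ == "__main__":
    for name, city in [("London", LONDON), ("New York", NEW_YORK)]:
        d = great_circle_km(*SINGAPORE, *city)
        print(f"Singapore-{name}: {d:,.0f} km, RTT floor {min_rtt_ms(d):.0f} ms")
    # Illustrative inputs: 1460-byte MSS, 180 ms RTT, 0.1% packet loss.
    ceiling = mathis_throughput_bps(1460, 0.180, 0.001)
    print(f"Single TCP flow ceiling: {ceiling / 1e6:.1f} Mbps")
```

Under these assumptions, Singapore-London is about 10,850 km (an RTT floor near 110 ms) and Singapore-New York about 15,300 km (roughly 150 ms), so the measured figures above reflect real cable routing on top of pure propagation. A single TCP flow at 180 ms RTT and 0.1% loss comes out at only about 2.5 Mbps, far below a broadcast-quality contribution bitrate.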

Architecting a Resilient Contribution and Distribution Network

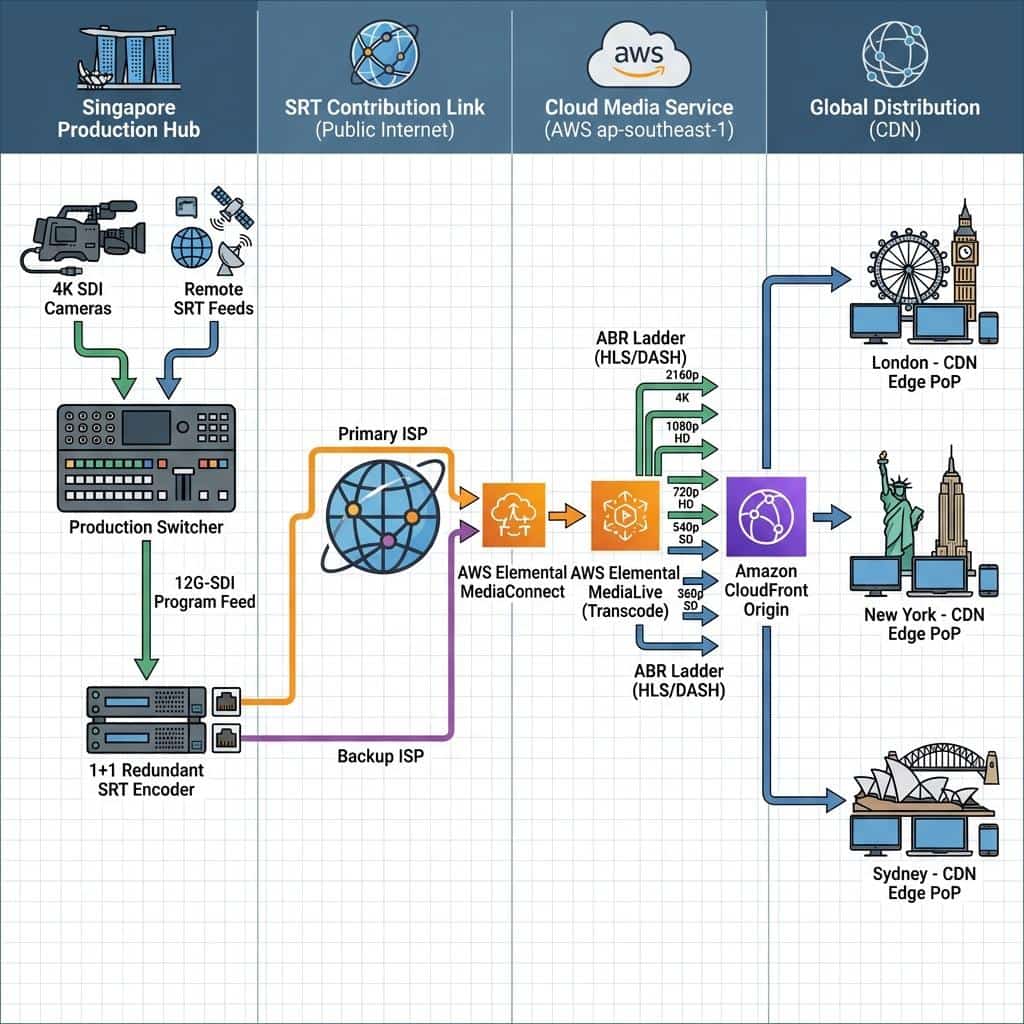

The solution lies in a multi-layered strategy that decouples the contribution feed from the distribution network. The primary goal is to transport a pristine, high-bitrate mezzanine feed from the Singapore production hub to a cloud processing region with minimal latency and maximum reliability. From there, the signal is transcoded and distributed globally via a Content Delivery Network (CDN). This approach confines the most significant latency challenge, the long-haul link, to a single, managed point-to-point connection, leaving the CDN to handle the final, shorter-distance delivery to regional audiences efficiently.

This architecture is fundamentally different from a direct-to-CDN approach using RTMP. It treats the connection between the event venue and the cloud as a broadcast-level contribution link, prioritizing stability and quality over all else. The protocol of choice for this critical link is Secure Reliable Transport (SRT). Designed for the rigors of transmission over unpredictable public internet connections, SRT runs over the User Datagram Protocol (UDP) and adds a packet recovery mechanism based on Automatic Repeat reQuest (ARQ). Unlike TCP, SRT retransmits only the specific packets the receiver reports as lost, and it does so within a fixed, configurable latency window; a packet that cannot be recovered in time is dropped rather than stalling the stream. This makes SRT exceptionally resilient to the packet loss inherent in long-distance internet travel and ensures the cloud ingest point receives a stable, continuous feed, which is the foundation for a successful global broadcast.
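Why a fixed latency window helps can be shown with a deliberately simplified model, which is an assumption of this sketch and not libsrt's actual algorithm. It assumes each loss-report-and-resend cycle costs about one RTT, so a window of `latency` milliseconds leaves room for floor(latency / RTT) retries, and that every transmission is lost independently with the same probability. The 1316-byte payload (seven 188-byte MPEG-TS packets) is assumed as a typical SRT live-mode payload size.

```python
import math

def retransmission_attempts(latency_ms: float, rtt_ms: float) -> int:
    """Simplified: each NAK-and-resend cycle costs ~1 RTT inside the latency window."""
    return math.floor(latency_ms / rtt_ms)

def residual_loss(loss_rate: float, latency_ms: float, rtt_ms: float) -> float:
    """Probability a packet is still missing at its playout deadline, assuming
    independent losses at the same rate on the original send and every retry."""
    return loss_rate ** (1 + retransmission_attempts(latency_ms, rtt_ms))

def unrecovered_per_hour(bitrate_bps: float, loss_rate: float, latency_ms: float,
                         rtt_ms: float, payload_bytes: int = 1316) -> float:
    """Expected unrecovered packets per hour for a stream at the given bitrate."""
    packets_per_hour = bitrate_bps / (payload_bytes * 8) * 3600
    return packets_per_hour * residual_loss(loss_rate, latency_ms, rtt_ms)

if __name__ == "__main__":
    # Illustrative inputs: 25 Mbps feed, 1% raw loss, 250 ms RTT.
    for multiplier in (1, 2, 4):
        latency = multiplier * 250
        lost = unrecovered_per_hour(25e6, 0.01, latency, 250)
        print(f"latency {latency} ms -> ~{lost:.3g} unrecovered packets/hour")
```

In this model each extra RTT of buffer multiplies the residual loss by the raw loss rate, which is why modest increases in SRT latency buy large gains in delivered quality on a lossy long-haul path.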

The Singapore Production Hub: A Zero-Compromise Signal Chain

The integrity of the entire global stream originates from the technical execution within the Singapore-based production control room. This environment must be engineered to broadcast standards, ensuring that every component in the signal chain, from cameras to the final contribution encoder, is optimized for quality and reliability. Any compromise here will be magnified across the global distribution network.

Baseband Signal Management and Processing

For a flagship corporate event, the internal production standard should be baseband video, typically running on a 12G Serial Digital Interface (12G-SDI) infrastructure to support uncompressed 4K UHD (3840×2160) signals at 50/59.94 frames per second. All primary sources, including professional studio cameras, presentation laptops with scan converters, and video playback servers, should feed into a central routing matrix. This router, such as a Ross Ultrix or Blackmagic Design Videohub, acts as the heart of the facility, allowing any source to be sent to any destination. The program feed is cut by a technical director on a production switcher (e.g., a Grass Valley Kula or a NewTek TriCaster 2 Elite). Audio is managed separately: all microphone and source audio is de-embedded, mixed on a professional audio console such as a Yamaha QL series desk, and then re-embedded into the final 12G-SDI program output, with audio delay matched to the video processing path to maintain lip-sync. This meticulous management of audio and video as separate but synchronized streams is critical for professional quality.
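The need for 12G-SDI follows from simple arithmetic. The sketch below counts only the active picture for 10-bit 4:2:2 video (20 bits per pixel) and ignores blanking and ancillary data such as embedded audio, then compares the result with the nominal 3G-SDI (SMPTE ST 424) and 12G-SDI (SMPTE ST 2082-1) link rates. It is a back-of-the-envelope check, not a full SDI payload calculation.

```python
from fractions import Fraction

# 10-bit 4:2:2: one luma sample plus one (alternating Cb/Cr) chroma sample per pixel.
BITS_PER_PIXEL_422_10BIT = 20

# Nominal integer-frame-rate link rates in bits/s; fractional-rate variants are 1/1.001 of these.
LINK_RATE_3G = 2.97e9
LINK_RATE_12G = 11.88e9

def active_video_bps(width: int, height: int, fps: Fraction) -> float:
    """Bit rate of the active picture only (no blanking, no ancillary data)."""
    return width * height * float(fps) * BITS_PER_PIXEL_422_10BIT

if __name__ == "__main__":
    for label, fps in [("50p", Fraction(50)), ("59.94p", Fraction(60000, 1001))]:
        rate = active_video_bps(3840, 2160, fps)
        print(f"UHD {label}: {rate / 1e9:.2f} Gb/s active video; "
              f"fits 3G-SDI: {rate < LINK_RATE_3G}, fits 12G-SDI: {rate < LINK_RATE_12G}")
```

At 59.94p the active picture alone is roughly 9.9 Gb/s, more than three times what a single 3G-SDI link carries, which is why UHD facilities use single-link 12G-SDI (or quad-link 3G-SDI).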

Hybrid Integration: Managing Remote Contributors

A modern town hall often involves remote speakers from the very cities the stream is targeting. Integrating these remote participants requires a robust return feed and communication architecture. Relying on a consumer-grade video conferencing platform is unacceptable because of its low bitrates, variable frame rates, and lack of professional signal control. A superior workflow uses the same SRT protocol for remote contribution. Each remote speaker can be equipped with a small hardware encoder or a software solution to send a high-quality feed back to the Singapore hub. In the control room, these SRT feeds are received by hardware decoders that convert them back to baseband SDI for seamless integration into the production switcher. Crucially, a custom mix-minus audio feed must be generated for each remote participant. This feed contains the full program audio minus their own microphone, preventing echo and feedback. A dedicated talkback system, such as a Riedel Bolero or a Clear-Com system, provides off-air communication between the director and all participants, both local and remote.
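The mix-minus rule can be expressed in a few lines. The sketch below operates on a short block of audio samples per source and builds, for each remote participant, the sum of every source except that participant's own microphone. In a real console this is done with aux or mix-minus buses and per-channel gains; the function and source names here are hypothetical and only illustrate the routing logic.

```python
from typing import Dict, List

def mix_minus(sources: Dict[str, List[float]], remotes: List[str]) -> Dict[str, List[float]]:
    """Return one return-feed block per remote: every source summed except their own mic."""
    lengths = {len(samples) for samples in sources.values()}
    if len(lengths) != 1:
        raise ValueError("all source blocks must have the same number of samples")
    n = lengths.pop()
    feeds: Dict[str, List[float]] = {}
    for remote in remotes:
        # Sum the "others" directly instead of subtracting the remote from the program mix.
        feeds[remote] = [
            sum(samples[i] for name, samples in sources.items() if name != remote)
            for i in range(n)
        ]
    return feeds

if __name__ == "__main__":
    # Hypothetical sources: a local presenter mic and two remote contributors.
    block = {"presenter": [1.0, 1.0], "london": [0.5, 0.0], "new_york": [0.0, 0.25]}
    print(mix_minus(block, ["london", "new_york"]))
```

Summing the other sources, rather than computing program minus self, keeps each return feed correct even if the program bus applies processing that would make a simple subtraction inexact.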

Contribution Encoding: The Gateway to the Cloud

The final program feed from the production switcher, complete with embedded audio and graphics, is sent to the contribution encoders. For a mission-critical event, a redundant 1+1 encoder setup is non-negotiable. These are typically high-performance hardware encoders, such as the Haivision Makito X4 or an AWS Elemental Live appliance. The primary encoder sends an SRT stream over the primary internet service provider (ISP), while the secondary encoder sends an identical stream over a separate, diverse-path ISP. The encoders should be configured for H.265 (HEVC) compression to maximize bandwidth efficiency; a typical setting for a 4K UHD contribution feed is a constant bitrate of 20-30 Mbps. The SRT settings must be carefully tuned, with latency configured to at least 4 times the RTT to the cloud ingest point. If the ingest point were a distant region such as US East (approx. 250 ms RTT from Singapore), this would mean an SRT latency setting of at least 1000-1200 ms to build a sufficient buffer for packet recovery. With ingest in a nearby region, as recommended below, the RTT and therefore the required buffer are much smaller.
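The sizing rule above can be captured in a small planning helper. This is a sketch under stated assumptions: the 4× RTT multiplier comes from the text; the 120 ms floor and the 25% recovery headroom are taken to be libsrt's live-mode defaults for `SRTO_LATENCY` and `SRTO_OHEADBW`; and `plan_srt_link` and `SrtLinkPlan` are hypothetical names, not part of any encoder's API.

```python
import math
from dataclasses import dataclass

SRT_DEFAULT_LATENCY_MS = 120  # assumed libsrt live-mode default for SRTO_LATENCY
SRT_DEFAULT_OVERHEAD = 0.25   # assumed libsrt default for SRTO_OHEADBW (25% recovery headroom)

@dataclass(frozen=True)
class SrtLinkPlan:
    latency_ms: int            # value to configure as SRT latency on both ends
    min_bandwidth_mbps: float  # capacity to reserve on EACH ISP path

def plan_srt_link(rtt_ms: float, video_mbps: float, rtt_multiplier: float = 4.0,
                  overhead: float = SRT_DEFAULT_OVERHEAD) -> SrtLinkPlan:
    """Latency = multiplier x RTT (never below the default floor); bandwidth = bitrate + headroom."""
    latency = max(SRT_DEFAULT_LATENCY_MS, math.ceil(rtt_multiplier * rtt_ms))
    return SrtLinkPlan(latency_ms=latency, min_bandwidth_mbps=video_mbps * (1 + overhead))

if __name__ == "__main__":
    # Distant ingest (approx. 250 ms RTT) versus a hypothetical nearby ingest (5 ms RTT).
    print(plan_srt_link(250, 25))
    print(plan_srt_link(5, 25))
```

Because the primary and secondary encoders each use their own ISP, the bandwidth figure applies per path, not to the two links combined.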

Cloud Architecture and Global Distribution

Once the SRT feeds leave the Singapore venue, they are ingested by a cloud media service. The choice of ingest location is critical: it should be the cloud region with the lowest and most stable latency from the venue, typically the AWS ap-southeast-1 (Singapore) region or a nearby equivalent. This minimizes the length of the vulnerable public-internet leg of the journey.
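"Lowest and most stable" can be turned into a simple scoring rule. The sketch below assumes you already have repeated RTT samples from the venue to each candidate region (how they are collected is out of scope) and ranks regions by mean RTT plus a jitter penalty of two population standard deviations. The weighting, the function names, and the sample values in the usage example are hypothetical placeholders, not measurements.

```python
import statistics
from typing import Dict, List

def region_score_ms(samples: List[float], jitter_weight: float = 2.0) -> float:
    """Lower is better: mean RTT plus a penalty proportional to RTT variability."""
    return statistics.mean(samples) + jitter_weight * statistics.pstdev(samples)

def pick_ingest_region(rtt_samples: Dict[str, List[float]], jitter_weight: float = 2.0) -> str:
    """Return the candidate region with the best (lowest) combined latency/stability score."""
    return min(rtt_samples, key=lambda region: region_score_ms(rtt_samples[region], jitter_weight))

if __name__ == "__main__":
    # Placeholder samples in milliseconds, for illustration only.
    samples = {
        "ap-southeast-1": [3.0, 3.2, 3.1, 3.0],
        "region-b": [2.0, 2.1, 14.0, 2.2],
    }
    print(pick_ingest_region(samples))
```

Penalizing variability rather than looking only at the minimum RTT reflects the point above: a slightly slower but steady path is easier to buffer for than a fast path with occasional spikes.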

Ingest, Transcoding, and Packaging

Within the cloud, a service like AWS Elemental MediaConnect is well suited to managing the dual SRT inputs. It can be configured with source failover, automatically switching to the secondary stream if the primary feed is interrupted. From MediaConnect, the pristine mezzanine signal is passed to a transcoding service such as AWS Elemental MediaLive. Here, the high-bitrate H.265 feed is converted into a multi-bitrate H.264 adaptive bitrate (ABR) ladder, which might range from 1080p at 5 Mbps down to 360p at 800 kbps, so that viewers with varying internet speeds can receive a continuous stream without buffering. The transcoder then packages the ABR streams into standard delivery formats such as HTTP Live Streaming (HLS) and Dynamic Adaptive Streaming over HTTP (DASH). These segment-based formats are what allow modern video players to switch between bitrates seamlessly.
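To show how an ABR ladder surfaces to players, the sketch below represents a ladder as data, renders an HLS multivariant ("master") playlist using the standard `#EXT-X-STREAM-INF` tag with `BANDWIDTH` and `RESOLUTION` attributes, and adds a naive player-side rendition choice. A transcoder such as MediaLive generates these playlists itself; this is only an illustration. Only the 1080p @ 5 Mbps and 360p @ 800 kbps rungs come from the text: the middle rungs, the 128 kbps audio bitrate, the path layout, and the 0.8 safety factor are all assumptions.

```python
from dataclasses import dataclass
from typing import List

@dataclass(frozen=True)
class Rendition:
    name: str
    width: int
    height: int
    video_bps: int

# Hypothetical ladder: top and bottom rungs from the text, middle rungs illustrative.
LADDER: List[Rendition] = [
    Rendition("1080p", 1920, 1080, 5_000_000),
    Rendition("720p", 1280, 720, 3_000_000),
    Rendition("540p", 960, 540, 1_800_000),
    Rendition("360p", 640, 360, 800_000),
]
AUDIO_BPS = 128_000  # assumed AAC audio bitrate shared by every rendition

def multivariant_playlist(ladder: List[Rendition]) -> str:
    """Render an HLS multivariant playlist with one media playlist per rendition."""
    lines = ["#EXTM3U", "#EXT-X-VERSION:3"]
    for r in ladder:
        # BANDWIDTH should be the peak bit rate; video + audio is a simplification
        # that ignores container overhead and encoder peaks.
        lines.append(f"#EXT-X-STREAM-INF:BANDWIDTH={r.video_bps + AUDIO_BPS},"
                     f"RESOLUTION={r.width}x{r.height}")
        lines.append(f"{r.name}/index.m3u8")  # hypothetical path layout
    return "\n".join(lines) + "\n"

def pick_rendition(ladder: List[Rendition], measured_bps: float, safety: float = 0.8) -> Rendition:
    """Naive selection: highest rung whose total bitrate fits within a safety margin."""
    budget = measured_bps * safety
    fitting = [r for r in ladder if r.video_bps + AUDIO_BPS <= budget]
    if not fitting:
        return min(ladder, key=lambda r: r.video_bps)  # fall back to the lowest rung
    return max(fitting, key=lambda r: r.video_bps)

if __name__ == "__main__":
    print(multivariant_playlist(LADDER))
    print(pick_rendition(LADDER, 2_000_000).name)
```

Real players use more sophisticated throughput and buffer-based heuristics, but the principle is the same: the multivariant playlist advertises the rungs, and the player moves between them segment by segment.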

CDN and Final Delivery

The final link in the chain is the CDN. The HLS and DASH manifests and segments are served from a cloud origin (such as Amazon S3) and distributed through a global CDN such as Amazon CloudFront or Akamai. The CDN caches the video segments at its Points of Presence (PoPs), or edge locations, around the world. When a viewer in London requests the stream, their player pulls the video segments from a nearby London edge server, not all the way from the Singapore origin. This shortens the network path for every segment request, reducing delivery delay and improving throughput and quality of experience. The total end-to-end latency for a viewer in this architecture, from the Singapore camera to their screen in London, will typically be in the range of 30-45 seconds, much of it coming from segment duration and the several segments the player buffers before starting playback. While not instantaneous, this latency is predictable and stable, which is essential for managing interactive elements like polling and moderated Q&A.
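The dominant terms in segment-based latency can be seen in a rough budget. The sketch below assumes that a segment can only be published once its full duration has been encoded, and that the player starts about three segments back from the live edge (RFC 8216 advises clients not to start less than three target durations from the end of the playlist). The per-stage values in the usage example are placeholders chosen for illustration, not measurements of this architecture.

```python
def hls_latency_estimate_s(segment_s: float, contribution_s: float, transcode_s: float,
                           cdn_s: float, buffered_segments: int = 3) -> float:
    """Rough glass-to-glass estimate for segment-based (HLS/DASH) delivery, in seconds."""
    packaging_s = segment_s                        # wait for a full segment before publishing
    player_buffer_s = buffered_segments * segment_s  # start a few segments behind live edge
    return contribution_s + transcode_s + packaging_s + cdn_s + player_buffer_s

if __name__ == "__main__":
    # Placeholder stage delays: ~1.2 s SRT buffer, 3 s transcode, 0.5 s CDN fetch.
    for seg in (6.0, 2.0):
        total = hls_latency_estimate_s(seg, contribution_s=1.2, transcode_s=3.0, cdn_s=0.5)
        print(f"{seg:.0f} s segments -> ~{total:.1f} s end to end")
```

In this model, segment duration appears four times (once for packaging and three times in the player buffer), so it is the main lever on end-to-end latency, while the SRT contribution buffer contributes comparatively little.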

Conclusion: Engineering Predictability in High-Stakes Environments

Managing multi-city latency for a corporate town hall from Singapore is an exercise in broadcast engineering and network architecture. It requires a strategic shift away from simple webcasting tools toward a resilient, component-based workflow that controls every step of the signal chain. By combining a zero-compromise baseband production hub, a robust SRT contribution link, and an intelligent cloud-based transcoding and distribution architecture, enterprises can overcome the inherent challenges of geographical distance. This approach transforms the unpredictable public internet into a reliable contribution path, ensuring that C-suite communications are delivered with the clarity, stability, and professionalism they demand. The result is a seamless global event that connects leadership with employees across continents, where the technology becomes transparent and only the message remains. For corporate event planners and IT directors, partnering with a production team that possesses deep expertise in these workflows is paramount to mitigating risk and achieving a successful global town hall.